MANOVA Post-Hoc Analysis: Univariate Follow-Up Tests in R

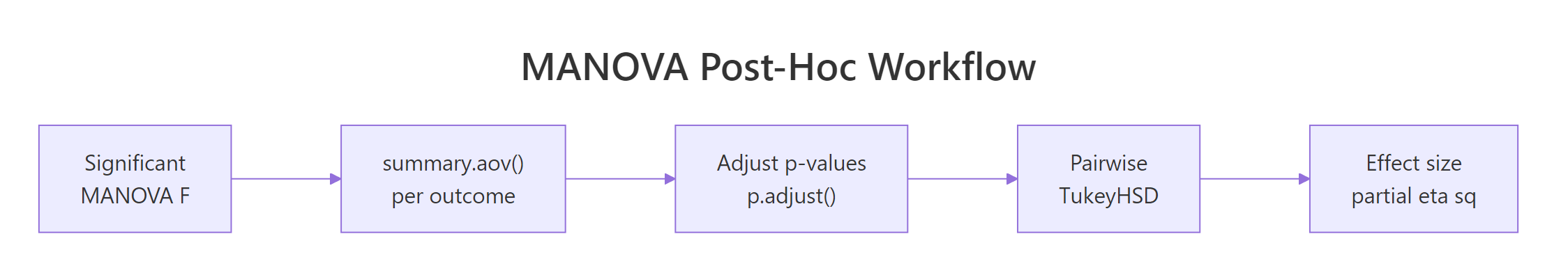

After a significant MANOVA, the multivariate F tells you the groups differ on the joint outcome bundle, but it does not say which outcomes drive the difference or which group pairs are responsible. Post-hoc analysis answers both questions. The standard recipe runs one univariate ANOVA per outcome, corrects the family-wise error rate, then runs pairwise contrasts within each significant outcome.

Why do you need a post-hoc step after a significant MANOVA?

A multivariate F lights up when the bundle of outcomes separates the groups, but it leaves the practical questions open. Which outcome carries the signal? summary.aov() answers that in one line by re-fitting one ANOVA per outcome from the same model object. The F values rank the outcomes by how strongly each one separates the groups, and that ranking guides every later step in the post-hoc workflow.

All four outcomes are individually significant, but the F values tell the real story. Petal.Length (F = 1180) and Petal.Width (F = 960) overshadow Sepal.Length (F = 119) and Sepal.Width (F = 49) by a factor of ten or more. That ranking is what the multivariate test was hiding inside the single Pillai number, and it is the first piece of information you should report.

Try it: Fit a smaller MANOVA on iris using only Sepal.Length and Petal.Length. Save it as ex_man2, then call summary.aov() to print two ANOVA tables.

Click to reveal solution

Explanation: Trim cbind() to two columns. The F values for the two retained DVs match the four-DV fit exactly, because each univariate ANOVA only uses its own column.

How do you correct for multiple comparisons across the univariate ANOVAs?

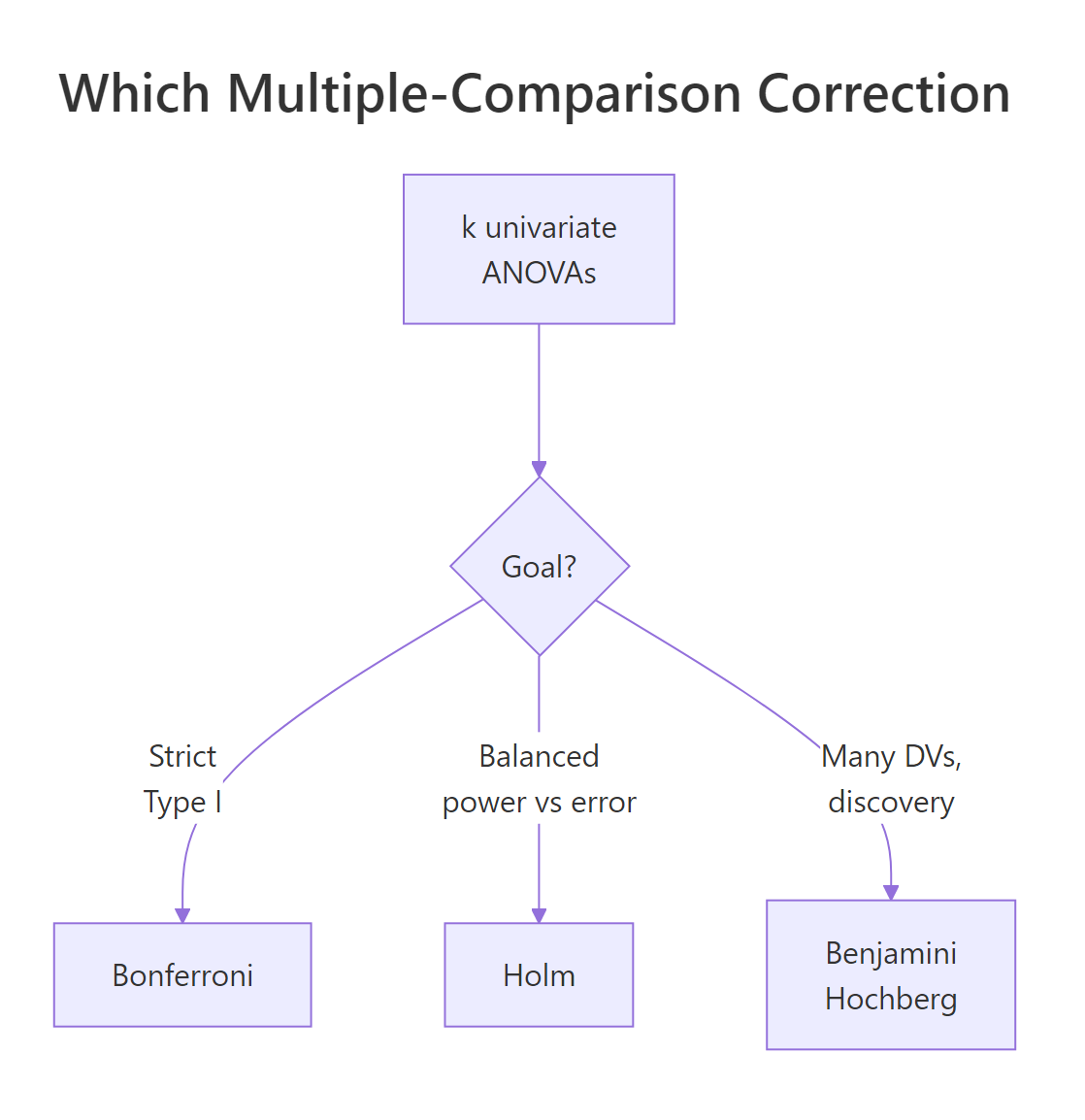

Each univariate ANOVA carries its own 5% false-positive risk. With four outcomes, the chance that at least one lights up by chance is roughly $1 - (1 - 0.05)^4 \approx 0.185$, almost four times the nominal rate. Three classical adjustments fix this. Bonferroni divides α by k, the strictest option. Holm orders the p-values smallest to largest and applies a stepwise threshold, more powerful than Bonferroni without losing strict Type I control. Benjamini-Hochberg (BH) controls the false discovery rate instead, which is appropriate when you have many outcomes and want to identify a set of likely signals.

Figure 1: Choosing a multiple-comparison correction across the univariate ANOVAs.

R bundles all three methods into p.adjust(). You feed it the raw p-vector and a method argument.

For the iris model the raw p-values are all effectively zero, so every correction keeps every outcome significant. The demo_pvals row uses fabricated borderline values to expose the differences. Bonferroni multiplies each p-value by k = 4, the harshest. Holm only multiplies the smallest by 4, the next by 3, and so on, retaining more power. BH applies the gentlest adjustment because it controls the false discovery rate rather than the family-wise rate. Pick one method before you look at the data and stick with it.

Try it: Apply the Holm correction to the same demo_pvals vector and save the result as ex_holm. Print it rounded to four decimals.

Click to reveal solution

Explanation: p.adjust() with method = "holm" orders the p-values, applies a stepwise multiplier (k, k-1, k-2, ...), and enforces monotonicity by clamping each adjusted value to be no smaller than the previous one. That is why Petal.Length and Petal.Width tie at 0.08.

How do you run pairwise group comparisons within each significant outcome?

A significant univariate ANOVA tells you "at least one group pair differs on this outcome," but not which pair. TukeyHSD() answers that by computing every pairwise mean difference and a confidence interval that controls the family-wise error rate across the contrasts within one outcome. You feed it a univariate aov() model, not the MANOVA object.

Every species pair differs significantly on petal length. The largest gap (4.09 cm) sits between virginica and setosa, the smallest (1.29 cm) between virginica and versicolor. To run the same comparison across every DV in one shot, wrap the call in lapply().

The Sepal.Width table shows two large negative gaps, setosa having the widest sepals, and a smaller positive gap between virginica and versicolor that is still significant at p = 0.009. The pattern is qualitatively different from petal length, which is why running pairwise comparisons per outcome is informative.

bartlett.test() rejects that assumption for your outcome, the Tukey p-values are unreliable. Switch to pairwise.t.test() with pool.sd = FALSE for a Welch-style fallback, or use the Games-Howell test from a local R session via the rstatix package.Sepal.Width is the only outcome that satisfies Bartlett's homogeneity assumption. For the other three, pairwise.t.test() with pool.sd = FALSE runs Welch-style two-sample tests and applies Holm. The conclusions match TukeyHSD here because the species are so well separated, but in marginal cases the Welch version is the safer reference.

Try it: Run TukeyHSD on Sepal.Width ~ Species and save the result as ex_tuk_sw. Print it and identify which species pair has the smallest absolute mean difference.

Click to reveal solution

Explanation: The virginica-versicolor row has the smallest absolute difference at 0.204 cm. It is still significant at p = 0.009, just much closer to the threshold than the other two pairs.

How do you measure and report MANOVA effect sizes?

Significance is not effect size. Two species can differ at p < 0.001 with 1500 observations even if the practical gap is tiny. Partial eta squared ($\eta^2_p$) gives you the proportion of variance in each outcome explained by the grouping factor. The formula is straightforward.

$$\eta^2_p = \frac{SS_\text{effect}}{SS_\text{effect} + SS_\text{error}}$$

Where:

- $SS_\text{effect}$ = the between-groups sum of squares for the species term in the per-DV ANOVA.

- $SS_\text{error}$ = the within-groups (residuals) sum of squares for the same DV.

- $\eta^2_p$ = a value between 0 and 1; conventional benchmarks are small (0.01), medium (0.06), and large (0.14).

summary.aov() already returns the sums of squares, so a one-line sapply() extracts them.

Petal.Length and Petal.Width both clear 0.92, meaning species explains over 92% of the variance in either petal measurement. Sepal width is much smaller at 0.40 (still a large effect by Cohen's benchmarks) and sepal length sits at 0.62. These numbers are the practical complement to the F-table: F says "this effect is reliable," $\eta^2_p$ says "this effect is huge."

Try it: Convert the partial eta squared for Petal.Length to Cohen's f using $f = \sqrt{\eta^2_p / (1 - \eta^2_p)}$. Save it as ex_cohen_f.

Click to reveal solution

Explanation: Cohen's f is a transformation of $\eta^2_p$ that grows without bound as the effect approaches 1. Petal length sits at $\eta^2_p = 0.941$, which translates to $f \approx 4$, far beyond Cohen's "large" cutoff of 0.40.

When should you use descriptive discriminant analysis instead?

Univariate follow-ups treat each outcome in isolation, which throws away the very correlation structure MANOVA used to find the effect in the first place. Descriptive discriminant analysis (DDA) does it differently. It finds linear combinations of the outcomes that maximally separate the groups, mirroring the multivariate question MANOVA actually asked. Methodologists like Smith, Lamb, and Henson (2020) argue DDA is the more theoretically appropriate post-hoc when DVs are correlated. R's MASS::lda() runs it in one line.

Read the scaling matrix column by column. LD1 (the first discriminant function) places heavy negative weights on Petal.Length and Petal.Width, and positive weights on the sepal measurements. The class centroids on LD1 spread from +7.6 (setosa) to -5.8 (virginica), so LD1 is the "petal versus sepal" axis that separates the species best. LD2 captures secondary variation, mostly in Sepal.Width versus Petal.Width, but the class centroids on LD2 sit much closer together.

Try it: Compute the predicted-class accuracy of lda_fit on the training data using predict(lda_fit)$class and table(). Save the confusion matrix as ex_conf.

Click to reveal solution

Explanation: setosa is perfectly classified, while versicolor and virginica swap a few flowers, the same pattern that shows up as the smaller group gap in the petal-length pairwise comparisons. DDA gives you a one-shot summary of how separable the groups really are.

Practice Exercises

Exercise 1: Full post-hoc on the airquality Month effect

Use the built-in airquality dataset. Drop incomplete rows with na.omit(). Fit a MANOVA with Ozone, Wind, Temp as joint outcomes and Month (coerced to factor) as the grouping factor. Run summary.aov(), apply Holm correction across the three p-values, identify the strongest outcome (largest F), and run TukeyHSD on it. Save the strongest-DV TukeyHSD object as aq_pairs.

Click to reveal solution

Explanation: Temperature has the largest F (around 41 from the per-DV table), so Tukey on Temp gives the most informative pairwise picture. July and August are statistically indistinguishable, but every other month-pair contrast either passes or sits very close to 0.05 after Tukey adjustment.

Exercise 2: A reusable post-hoc helper function

Write a function posthoc_manova(model, alpha = 0.05, method = "holm") that takes a fitted manova object and returns a data frame with one row per DV containing: F, raw_p, adjusted_p, partial_eta2, and a significant flag (TRUE if adjusted_p < alpha). Apply it to iris_man and save the result as posthoc_table.

Click to reveal solution

Explanation: The helper folds five operations, summary.aov walking, p extraction, p.adjust, eta squared, threshold check, into one reusable function. Now any new MANOVA can be summarised with one call. The Holm-adjusted p-values stay tiny because the iris-versus-species effect is enormous.

Complete Example

Putting the entire post-hoc workflow on iris end to end:

Plain-English write-up: All four floral measurements differ significantly across the three iris species after Holm correction (all adjusted p < 0.001). Petal length and petal width carry the strongest effects (partial η² = 0.94 and 0.93 respectively), and TukeyHSD on petal length confirms every species pair is distinguishable, with the largest gap between virginica and setosa (Δ = 4.09 cm). Descriptive discriminant analysis confirms the multivariate structure: LD1 is a petal-versus-sepal axis that places setosa far from the other two species. That is exactly the level of detail a peer-reviewed methods section expects.

Summary

Figure 2: The full post-hoc workflow from a significant MANOVA to a journal-ready conclusion.

| Step | R recipe |

|---|---|

| Per-outcome F-table | summary.aov(manova_object) |

| Extract raw p-values | sapply(aov_summary, function(tab) tab[["Pr(>F)"]][1]) |

| Multiple-comparison correction | p.adjust(p, method = "holm") (or "bonferroni", "BH") |

| Pairwise contrasts (equal var.) | TukeyHSD(aov(dv ~ group, data = ...)) |

| Pairwise contrasts (unequal var.) | pairwise.t.test(..., pool.sd = FALSE, p.adjust.method = "holm") |

| Variance-homogeneity check | bartlett.test(dv ~ group, data = ...) |

| Effect size per outcome | $\eta^2_p = SS_\text{effect} / (SS_\text{effect} + SS_\text{error})$ |

| Multivariate post-hoc view | MASS::lda(group ~ dv1 + dv2 + ...) |

| When to use Bonferroni | Few outcomes, confirmatory study |

| When to use Holm | Default for k ≤ 6, strict Type I control with more power than Bonferroni |

| When to use BH | Many outcomes, exploratory study, control FDR |

References

- Smith, K. N., Lamb, K. N., & Henson, R. K. (2020). Making Meaning out of MANOVA: The Need for Multivariate Post Hoc Testing in Gifted Education Research. Gifted Child Quarterly, 64(2), 96-115. Link

- Holm, S. (1979). A simple sequentially rejective multiple test procedure. Scandinavian Journal of Statistics, 6(2), 65-70.

- Benjamini, Y., & Hochberg, Y. (1995). Controlling the false discovery rate: a practical and powerful approach to multiple testing. Journal of the Royal Statistical Society Series B, 57(1), 289-300.

- R Core Team. *

?summary.aovand?TukeyHSDin base R.* Link - Tabachnick, B. G., & Fidell, L. S. (2019). Using Multivariate Statistics, 7th edition. Pearson. Chapter 7 on MANOVA.

- Penn State STAT 505. Lesson 8.5: Comparing Mean Vectors. Link

- Datanovia. One-Way MANOVA in R. Link

- Venables, W. N., & Ripley, B. D. (2002). Modern Applied Statistics with S, 4th edition. Springer. Chapter 12 on

MASS::ldaand discriminant analysis.

Continue Learning

- MANOVA in R, the parent tutorial that fits the multivariate model this article picks up from. Read it first if

manova()syntax or Pillai versus Wilks feels unfamiliar. - Post-Hoc Tests After ANOVA, deeper coverage of

TukeyHSD(), Bonferroni, and pairwise contrasts in the single-outcome setting that the per-DV step here reduces to. - One-Way ANOVA in R, the single-outcome companion that each

summary.aov()row corresponds to.