Functional Programming in R: The Mindset That Makes Your Code 10× Cleaner

Functional programming in R is the discipline of treating functions as ordinary values: you can store them, pass them around, and compose them, so repetitive loops collapse into single lines that say exactly what they do. Once the mindset clicks, much of your R code gets shorter, safer, and easier to read.

Why is functional programming a mindset, not a package?

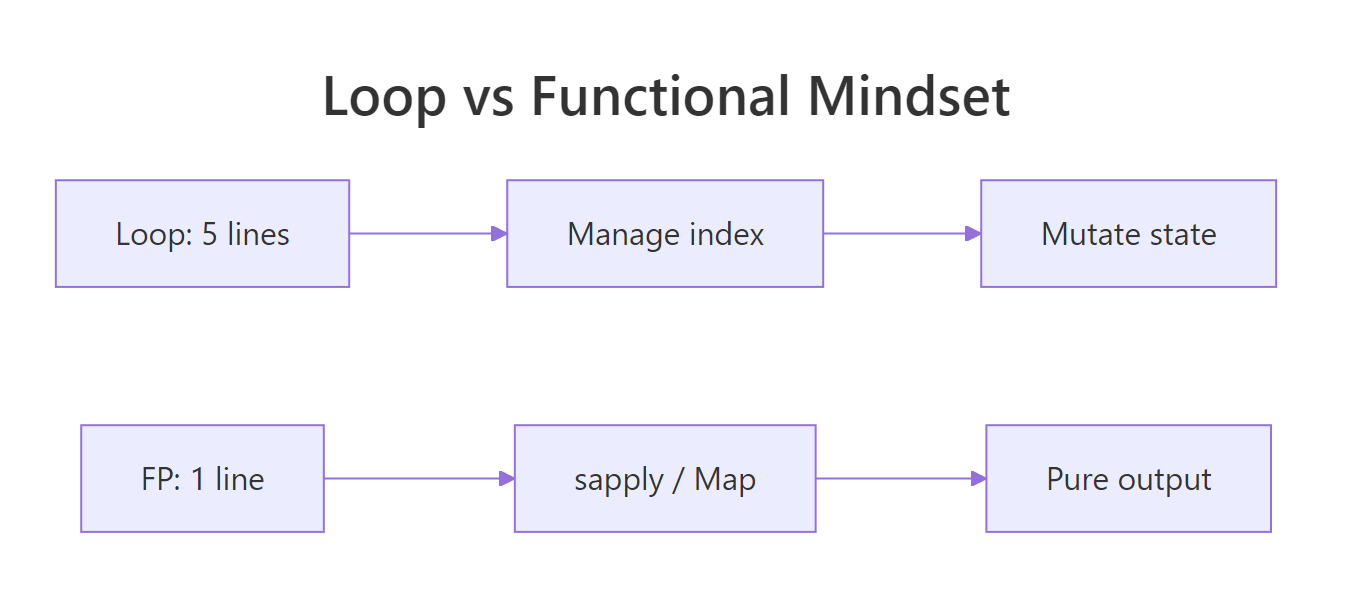

You've written the loop before: declare an empty vector, count indices, assign by position, try not to break the bookkeeping. It works, but most of the code is scaffolding, not meaning. Functional style flips that ratio. You describe the transformation once and hand it to R; the iteration disappears into a single call. Here is the same answer, both ways.
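The comparison can be sketched as follows. The input vector x and the result names are illustrative assumptions, not taken from the original listing:

```r
x <- c(1, 2, 3, 4, 5)  # illustrative input (assumed)

# Loop version: allocate a buffer, walk the indices, assign by position
squares_loop <- numeric(length(x))
for (i in seq_along(x)) {
  squares_loop[i] <- x[i]^2
}

# Functional version: describe the transformation once
squares_fn <- sapply(x, function(v) v^2)

identical(squares_loop, squares_fn)  # TRUE
```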

Both blocks produce the same vector. The loop spends four lines managing an index and a result buffer before it ever mentions squaring. The sapply call says, in one line, "apply this function to each element." That is the entire pitch of functional style: stop writing the bookkeeping, start writing the meaning.

sapply, Map, Filter, and Reduce ship with R itself. The popular purrr package is a modern, type-stable wrapper on the same ideas; we will see one line of it near the end so you can recognise the pattern later.

Try it: Convert a vector of Celsius temperatures temps_c <- c(15, 22, 8, 30, 18) to Fahrenheit using sapply and the formula F = C * 9/5 + 32. Save the result to ex_temps_f.

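One possible solution, written as a minimal sketch (the argument name celsius is an arbitrary choice):

```r
temps_c <- c(15, 22, 8, 30, 18)

# Apply the conversion formula to every element
ex_temps_f <- sapply(temps_c, function(celsius) celsius * 9/5 + 32)
ex_temps_f  # 59.0 71.6 46.4 86.0 64.4
```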

Explanation: sapply calls the anonymous function on every element of temps_c and simplifies the result to a numeric vector. The whole conversion is a one-liner because the "what" fits in one expression.

How are functions first-class objects in R?

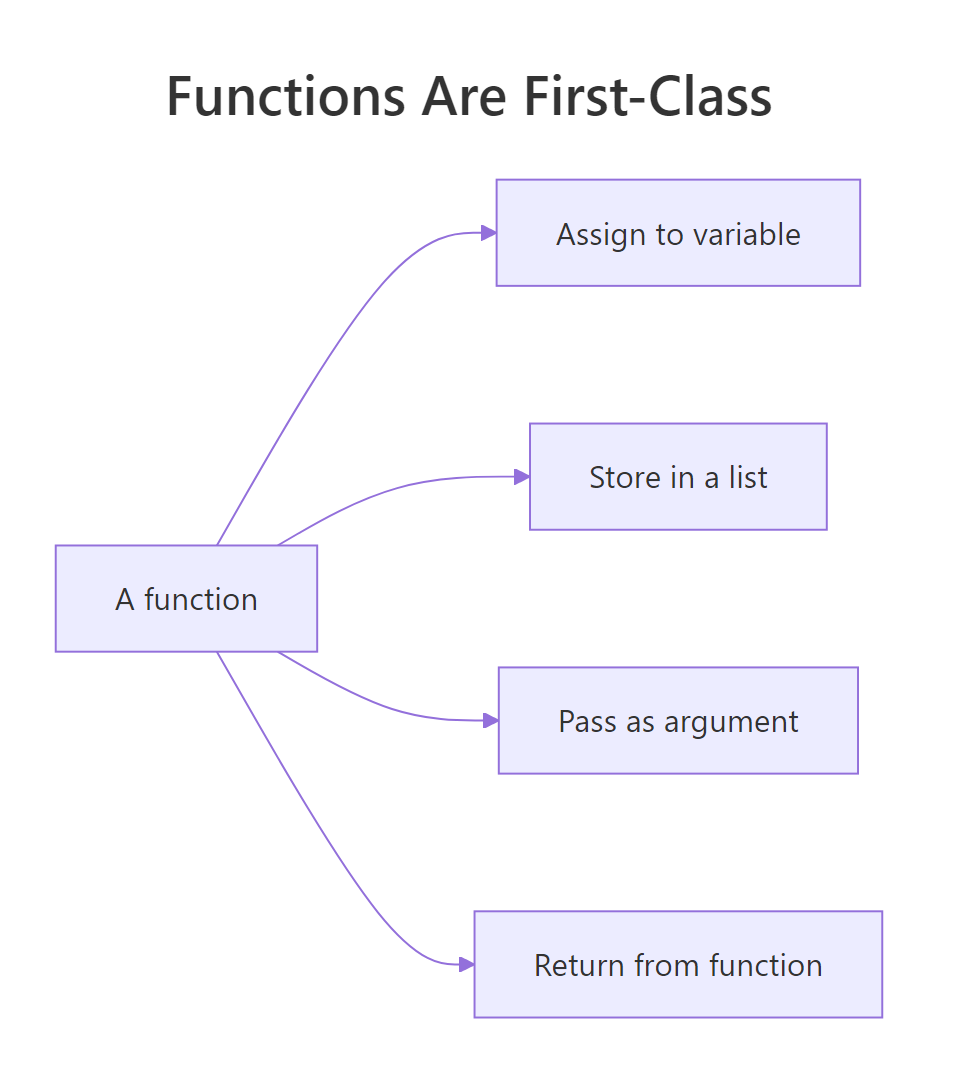

"First-class" is a claim about status: a value is first-class when you can do the same things to it that you can do to any other value. Integers are first-class, you can assign them, put them in a list, pass them to a function, or return them. In R, functions have exactly the same privileges. Seeing the proof of that is the fastest way to unlock the mindset.

double is now a name that refers to a function, exactly the way x <- 7 makes x refer to a number. The ops list holds three functions together, each retrievable with $. If this feels normal, good: that is the goal. Functions in R are ordinary values you can carry around in any container.
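A sketch of what that looks like in practice. Only double and ops are named in the text; the entries square and negate are assumed here for illustration:

```r
# Assign a function to a name, like any other value.
# (This masks base R's double() in the current session.)
double <- function(x) x * 2
double(7)  # 14

# Store functions in a list; square and negate are illustrative entries
ops <- list(
  double = double,
  square = function(x) x^2,
  negate = function(x) -x
)

ops$square(4)  # 16
ops$negate(3)  # -3
```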

The other two privileges are "pass as an argument" and "return from another function." Here is a function that takes another function and applies it twice.
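A minimal version of such a function might look like this. The argument order (f, x) and the example inputs are assumptions:

```r
# A higher-order function: it receives a function f and calls it twice
apply_twice <- function(f, x) f(f(x))

double <- function(x) x * 2

apply_twice(double, 3)              # 12
apply_twice(function(x) x + 10, 1)  # 21
```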

apply_twice is a higher-order function: a function whose argument (or return value) is itself a function. The first call hands over double, the second hands over an inline anonymous function. R treats them identically because, to R, they are just values of type function.

Figure 1: Four things you can do with a function that you can also do with any value.

Writing function(x) x + 1 inline is shorter than defining a helper you will never reuse. R 4.1+ also supports the shorthand \(x) x + 1, which reads especially well inside sapply and Map.

Try it: Write ex_apply_thrice(f, x) that applies f three times to x. Test it using \(x) x + 1 on 0; the answer should be 3.

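One way to write it, as a sketch (the \(x) shorthand needs R 4.1 or later):

```r
# Compose f with itself three times
ex_apply_thrice <- function(f, x) f(f(f(x)))

ex_apply_thrice(\(x) x + 1, 0)  # 3
```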

Explanation: The function composes f with itself three times. Starting from 0, each call adds one: 0 -> 1 -> 2 -> 3. This is a tiny taste of how you build behaviour by combining functions instead of writing more code.

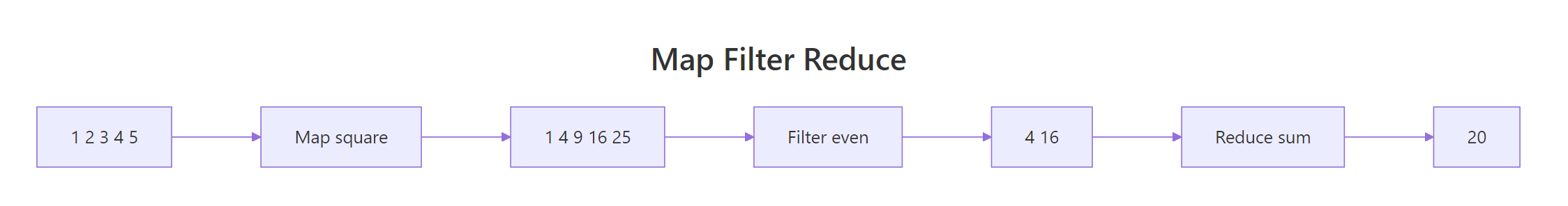

What are map, filter, and reduce, R's three core functionals?

Nearly every repetitive task on a collection fits one of three shapes. Map transforms each element, filter keeps elements that pass a test, and reduce collapses everything into a single value. R ships all three as base functions, Map(), Filter(), and Reduce(), plus the friendly vector-returning cousins sapply() and vapply(). Once you recognise the three shapes, you will spot them everywhere.
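The three shapes can be sketched on the vector 1:6. The variable names below are illustrative assumptions:

```r
nums <- 1:6

# Map: transform each element (always returns a list)
mapped <- Map(function(x) x^2, nums)

# sapply: same transformation, simplified to a plain vector
squared <- sapply(nums, function(x) x^2)   # 1 4 9 16 25 36

# Filter: keep the elements that pass a test
evens <- Filter(function(x) x %% 2 == 0, nums)  # 2 4 6

# Reduce: collapse everything into one value
total <- Reduce(`+`, nums)                       # 21

# accumulate = TRUE keeps every intermediate value
running <- Reduce(`+`, nums, accumulate = TRUE)  # 1 3 6 10 15 21
```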

Read from top to bottom: Map returned a list (one squared value per input), sapply did the same job but gave back a plain numeric vector, Filter kept only the even values, and Reduce collapsed 1:6 to the sum 21. The accumulate = TRUE variant is a debugging gift: it shows you every intermediate value (1, 3, 6, 10, 15, 21), so you can see how the running sum of 1:6 arrives at 21.

Figure 2: How map, filter, and reduce transform a collection step by step.

Map() always returns a list. When you want a plain vector, use sapply() or vapply() (stricter, type-checked), or wrap the Map() call in unlist(). Forgetting this is a top reason beginners think functional code "looks weird": they are getting lists where they expected numbers.

Try it: Use Filter to keep only strings longer than 3 characters from words <- c("R", "cat", "tiger", "ox", "whale"). Save the result to ex_long_words.

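A possible solution, sketched with an anonymous predicate:

```r
words <- c("R", "cat", "tiger", "ox", "whale")

# Keep only the strings with more than 3 characters
ex_long_words <- Filter(function(w) nchar(w) > 3, words)
ex_long_words  # "tiger" "whale"
```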

Explanation: nchar(w) > 3 is the predicate, a function that returns TRUE or FALSE for each element. Filter keeps only the inputs for which the predicate is TRUE. "tiger" (5) and "whale" (5) pass; the rest are dropped.

How do pure functions and closures power functional style?

Two ideas make functional code safe and reusable. A pure function depends only on its arguments and changes nothing else: given the same inputs, it always returns the same output. A closure is a function that remembers the environment it was created in, so it can carry a piece of state without using a global variable. Together they explain why functional R is easy to test and easy to compose.
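To make the contrast concrete, here is a sketch of one pure and one impure function. The names add, impure_increment, and counter follow the discussion in this section; the bodies are assumed:

```r
# Pure: the result depends only on the arguments
add <- function(a, b) a + b
add(2, 3)  # 5

# Impure: the result depends on (and modifies) a variable outside the function
counter <- 0
impure_increment <- function() {
  counter <<- counter + 1  # <<- assigns in the enclosing (here global) environment
  counter
}

impure_increment()  # 1
impure_increment()  # 2
```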

add(2, 3) will return 5 forever, no matter how many times or in what order you call it. That is purity. impure_increment() returns a different number on every call because its answer depends on the hidden counter variable. Impure functions can be useful, but they make code harder to reason about: to predict the output, you need to know the history of every prior call. Functional style prefers purity because purity is what makes Map, Filter, and Reduce trustworthy: a pure function gives the same result for each element no matter when or how often it is called.

A closure looks similar but uses the outer environment in a controlled, read-only way.
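A function factory along these lines might be written as below. The force() call is a defensive addition, not something the text requires: it evaluates the argument immediately so lazy evaluation cannot surprise you later.

```r
make_multiplier <- function(factor) {
  force(factor)           # evaluate the argument now (defensive, optional)
  function(x) x * factor  # the returned function closes over factor
}

times_three <- make_multiplier(3)
times_ten   <- make_multiplier(10)

times_three(5)  # 15
times_ten(5)    # 50
```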

make_multiplier(3) returns a brand-new function whose body refers to factor. That factor lives in the environment make_multiplier was running in when it returned, and the returned function keeps a reference to it for as long as the function exists. times_three and times_ten are two different closures, each remembering its own factor. This is how you build specialised functions from a general recipe: no classes, no templates, just a function that returns a function.

The returned function remembers factor because it was born inside make_multiplier. This "memory" is what lets one recipe generate many specialised workers without repeating yourself.

Try it: Write ex_make_greeter(greeting) that returns a function pasting greeting before a name. Use it to build a hi function and call it on "Selva".

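One way to solve it, as a sketch. Using paste() with its default space separator is an assumption about the intended output:

```r
ex_make_greeter <- function(greeting) {
  force(greeting)                       # defensive, optional
  function(name) paste(greeting, name)  # closes over greeting
}

hi <- ex_make_greeter("Hi")
hi("Selva")  # "Hi Selva"
```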

Explanation: ex_make_greeter returns an inner function that closes over greeting. Calling ex_make_greeter("Hi") creates a new closure where greeting is "Hi" forever, so hi("Selva") pastes "Hi" in front of any name you pass.

When should you reach for functional style instead of a loop?

Not every loop needs to be refactored. If the loop is tiny, imperative, and easier to read than the alternative, leave it alone; the goal is clarity, not ideological purity. But the moment a loop is describing a transformation (each → something), a filter (keep items where), or an accumulation (combine all into one), that loop is secretly a functional, and rewriting it often shrinks the code by half.

Here is a concrete comparison on the built-in mtcars dataset: compute the mean of three columns.
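A sketch of the two versions follows. The choice of columns (mpg, hp, wt) and the variable names are assumptions:

```r
cols <- c("mpg", "hp", "wt")  # assumed column choice

# Loop version: build a named buffer, then fill it column by column
means_loop <- numeric(length(cols))
names(means_loop) <- cols
for (col in cols) {
  means_loop[col] <- mean(mtcars[[col]])
}

# Functional version: a data frame is a list of columns
means_fn <- sapply(mtcars[cols], mean)

all.equal(means_loop, means_fn)  # TRUE
```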

Both versions return the same named numeric vector. The functional version is a single line because sapply already knows how to iterate over a list (a data frame is a list of columns) and collect results by name. The loop version needs four lines to reproduce that plumbing by hand. Read the sapply line aloud: "apply mean to each of these columns." That sentence is the code.

If you work in the tidyverse, the same idea is one line of purrr:
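As a sketch, assuming the purrr package is installed and reusing the same three assumed columns (mpg, hp, wt):

```r
# Guarded so the snippet is harmless when purrr is not installed
if (requireNamespace("purrr", quietly = TRUE)) {
  means_purrr <- purrr::map_dbl(mtcars[c("mpg", "hp", "wt")], mean)
  print(means_purrr)
}
```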

map_dbl is the type-stable sibling of sapply: it returns a double vector or it errors; it never silently gives you a list. That guarantee is the main reason purrr exists alongside base R's functionals.

sapply, lapply, Map, Filter, and Reduce are always available and teach the vocabulary. purrr::map_dbl, map_chr, map_lgl, and map_df add type-stable returns and pipe-friendly ergonomics when you want them.

A practical decision list:

- Describing a transformation? Use sapply, Map, or purrr::map_*.

- Keeping only some elements? Use Filter or purrr::keep.

- Collapsing to one value? Use Reduce or purrr::reduce.

- Need a running side-effect like printing, plotting, or writing files? Use for, or purrr::walk if you want the functional style. Side effects are legal; they are just not the functionals' strength.

Try it: Replace the loop total <- 0; for (i in 1:100) total <- total + i with a one-line Reduce call. Save the result to ex_total.

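A one-line sketch of the replacement:

```r
# Fold + across 1:100
ex_total <- Reduce(`+`, 1:100)
ex_total  # 5050
```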

Explanation: Reduce applies + pairwise across the vector: 1 + 2 = 3, then 3 + 3 = 6, then 6 + 4 = 10, and so on. The final value is the classic Gauss sum 5050. One line replaces a three-line loop, and the intent reads right off the page.

Practice Exercises

These capstones combine two or more ideas from the tutorial. Each uses distinct variable names prefixed with my_ so you can experiment without overwriting tutorial state.

Exercise 1: Filter then map for word lengths

Given the list below, use Filter and sapply together to return a numeric vector of lengths of words that have at least 5 letters. Save the result to my_long_lengths.

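The original input list is not reproduced here, so the sketch below uses a stand-in: my_words is an assumed list that contains the three long words named in the explanation plus a few short ones.

```r
# Stand-in data (assumed); the short words are illustrative
my_words <- list("fig", "banana", "kiwi", "strawberry", "pear", "watermelon")

# Step 1: keep words with at least 5 letters
long_words <- Filter(function(w) nchar(w) >= 5, my_words)

# Step 2: measure each surviving word
my_long_lengths <- sapply(long_words, nchar)
my_long_lengths  # 6 10 10
```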

Explanation: Filter keeps "banana", "strawberry", and "watermelon". sapply(long_words, nchar) then measures each surviving word. This is the canonical filter-then-map shape and is how most real data pipelines begin.

Exercise 2: Build a power function with a factory

Write a function factory make_power(n) that returns a function raising its input to the nth power. Use it to create cube and then apply cube to 1:5. Save the resulting vector to my_cubes.

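A possible solution, sketched with sapply to stay in the tutorial's style. Because ^ is vectorised, calling cube(1:5) directly would give the same values.

```r
make_power <- function(n) {
  force(n)         # defensive, optional
  function(x) x^n  # closes over n
}

cube <- make_power(3)
my_cubes <- sapply(1:5, cube)
my_cubes  # 1 8 27 64 125
```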

Explanation: make_power(3) returns a closure that remembers n = 3. Every call to cube(x) computes x^3. You could also build square <- make_power(2) from the same factory: one recipe, many specialised workers.

Exercise 3: Running factorial with accumulate

Use Reduce with accumulate = TRUE to compute the running product of 1:6; these are the factorials 1!, 2!, 3!, up to 6!. Save the resulting vector to my_factorials.

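One way to write it, as a short sketch:

```r
# Running product: each element is the product of everything so far
my_factorials <- Reduce(`*`, 1:6, accumulate = TRUE)
my_factorials  # 1 2 6 24 120 720
```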

Explanation: Multiplication is the reducer. accumulate = TRUE captures each intermediate product: 1, then 1*2 = 2, then 2*3 = 6, then 6*4 = 24, and so on. Running totals and running products are the two most useful Reduce patterns in practice.

Complete Example

Let's put these ideas to work on a tiny but realistic task: given a small inventory, compute the average price of items that are currently in stock. This example uses first-class functions, data stored as a list of records, a filter, a map, and a reduce: the whole FP toolkit on three lines of data.
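The original inventory data is not reproduced here, so the sketch below assumes three hypothetical records with name, price, and in_stock fields:

```r
# Hypothetical inventory: a list of records (all values assumed)
inventory <- list(
  list(name = "widget", price = 4.50,  in_stock = TRUE),
  list(name = "gadget", price = 12.00, in_stock = FALSE),
  list(name = "gizmo",  price = 7.50,  in_stock = TRUE)
)

# Filter: drop out-of-stock items
in_stock <- Filter(function(item) item$in_stock, inventory)

# Map: extract the price field
prices <- sapply(in_stock, function(item) item$price)

# Reduce: collapse the prices into a sum, then divide to get the average
avg_price <- Reduce(`+`, prices) / length(prices)
avg_price  # 6
```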

Each step has a single job and a single shape. You filter to drop out-of-stock items, map to extract the field you care about, and reduce to collapse many values into one. Every intermediate result is visible, so debugging is straightforward: you check each step one at a time. Once the steps work, you can chain them into a single line for production:
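A chained version might look like the following, again assuming a hypothetical inventory of records with price and in_stock fields, and using mean() in place of the manual reduce:

```r
# Hypothetical inventory (all values assumed)
inventory <- list(
  list(name = "widget", price = 4.50,  in_stock = TRUE),
  list(name = "gadget", price = 12.00, in_stock = FALSE),
  list(name = "gizmo",  price = 7.50,  in_stock = TRUE)
)

# Filter, map, and collapse in one nested expression
avg_in_stock <- mean(sapply(Filter(function(item) item$in_stock, inventory),
                            function(item) item$price))
avg_in_stock  # 6
```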

That one-liner is the reward for learning the vocabulary. It reads from the outside in, like a sentence: "take the mean of the prices of the in-stock items in inventory." Your future self will thank you for writing it this way.

Summary

Functional programming in R is a mindset before it is a toolkit. Once you accept that functions are ordinary values, the rest falls out naturally: map, filter, and reduce become the obvious way to describe repetitive work, pure functions become the obvious way to keep results predictable, and closures become the obvious way to build specialised workers from a single recipe.

Figure 3: The mental move from looping to functional style.

Five takeaways to keep:

- Functions are values. Assign them, store them in lists, pass them as arguments, return them from other functions.

- Three shapes cover most iteration. Transform with Map/sapply, filter with Filter, collapse with Reduce. Spot the shape, pick the functional.

- Pure functions are easier to reason about. Same inputs, same output, no hidden state: that is the guarantee that makes composition safe.

- Closures carry state cleanly. A function returned from another function remembers the environment it was born in; use that to build specialised workers.

- Start with base R, graduate to purrr. sapply, Map, Filter, and Reduce teach the vocabulary. purrr::map_dbl and friends add type safety when you need it.

