Binomial Distribution Exercises in R: 10 Practice Problems Solved

These 10 binomial distribution exercises in R walk you from dbinom() one-liners to binom.test() inference, with full runnable solutions. Every problem comes with a worked solution and an explanation, so you can check your answer the moment you finish coding.

What quick R one-liners solve binomial problems?

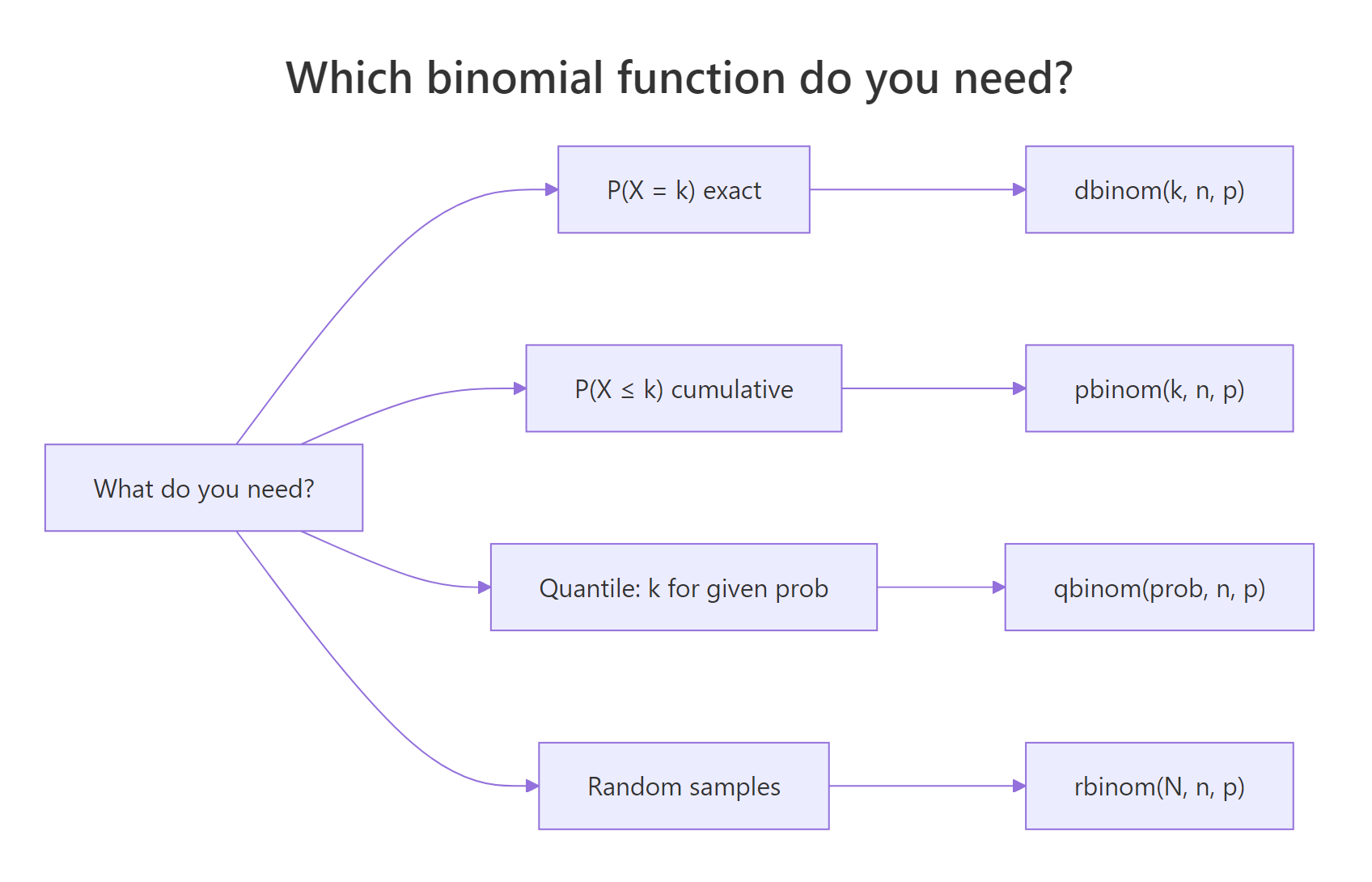

The binomial distribution answers one question: given n trials with success probability p, how likely is each count of successes? R gives you four functions, dbinom(), pbinom(), qbinom(), rbinom(), that cover exact probability, cumulative probability, quantiles, and random samples. Before the 10 problems, a single runnable block shows the two most common cases side by side so you can spot which function fits a new question at a glance.
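Here is a minimal version of that block, assuming 10 fair coin tosses. The variable names are illustrative.

```r
# Probability of exactly 5 heads in 10 fair coin tosses: P(X = 5)
p_exact <- dbinom(5, size = 10, prob = 0.5)

# Probability of 5 or fewer heads: P(X <= 5)
p_cum <- pbinom(5, size = 10, prob = 0.5)

p_exact   # about 0.246
p_cum     # about 0.623
```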

The first call asks "what is the probability of exactly 5 heads in 10 fair tosses?" The second asks "what is the probability of 5 or fewer?" Both calls use the same parameters (size = 10 trials, prob = 0.5 success probability) and differ only in the function name. That two-function pairing handles most questions you will meet.

Figure 1: The four binomial functions; pick one by what the question asks for.

The naming pattern (d for density/mass, p for cumulative, q for quantile, r for random) works for every distribution in base R (dnorm, ppois, qexp, rt). Learn it once for binomial, and reuse it everywhere.

Try it: Compute the probability of exactly 3 successes in 8 trials with success probability 0.4.

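Solution, as a minimal sketch (the name try_d is illustrative):

```r
# P(X = 3) for X ~ Binomial(n = 8, p = 0.4)
try_d <- dbinom(3, size = 8, prob = 0.4)
try_d   # about 0.279
```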

Explanation: dbinom(k, n, p) returns P(X = k). Here X is the number of successes in 8 independent trials with p = 0.4, and k = 3.

How do you compute exact binomial probabilities with dbinom()?

dbinom(k, size = n, prob = p) returns the probability mass at a single count. The formula under the hood is the classic binomial PMF:

$$P(X = k) = \binom{n}{k} p^k (1-p)^{n-k}$$

Where:

- $n$ = number of trials

- $k$ = count of successes you ask about

- $p$ = probability of success on each trial

- $\binom{n}{k}$ = the number of ways to choose which trials succeed
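To confirm that dbinom() computes exactly this formula, you can evaluate the PMF by hand with choose() and compare the two. The case n = 10, k = 7, p = 0.5 below is an arbitrary example.

```r
n <- 10; k <- 7; p <- 0.5

# Binomial PMF written out term by term
by_hand   <- choose(n, k) * p^k * (1 - p)^(n - k)
by_dbinom <- dbinom(k, size = n, prob = p)

all.equal(by_hand, by_dbinom)   # TRUE
```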

For a single k, a single dbinom() call is enough. For a range of counts, say "between 3 and 5 successes", pass a vector of k values and sum the result.
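The following sketch shows both cases for 10 fair tosses. The name p_seven comes from the discussion below; p_range is illustrative.

```r
# Exactly 7 heads in 10 fair tosses
p_seven <- dbinom(7, size = 10, prob = 0.5)
p_seven   # about 0.117

# Between 3 and 5 heads, inclusive: dbinom() is vectorized over k
p_range <- sum(dbinom(3:5, size = 10, prob = 0.5))
p_range   # about 0.568
```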

p_seven tells you that exactly 7 heads in 10 tosses happens about 12% of the time. That is not rare, but it is not the modal outcome either (the mode is 5 heads). The range call sums the PMF at k = 3, 4, 5. Because dbinom() is vectorized over its first argument, passing 3:5 returns three probabilities, and sum() collapses them into one number (roughly 0.57).

A single vectorized call such as sum(dbinom(3:5, 10, 0.5)) is faster and more readable than three separate calls. It also scales cleanly when the range gets wider (e.g., sum(dbinom(30:60, 100, 0.5))).

Try it: Find the probability of exactly 4 successes in 12 trials with success probability 0.3.

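Solution, as a minimal sketch (the name try_d2 is illustrative):

```r
# P(X = 4) for X ~ Binomial(n = 12, p = 0.3)
try_d2 <- dbinom(4, size = 12, prob = 0.3)
try_d2   # about 0.231
```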

Explanation: Same function, new parameters. The peak of this PMF sits near np = 3.6, so k = 4 is close to the mode and picks up a large share of the mass.

How do you compute cumulative probabilities with pbinom()?

pbinom(q, size = n, prob = p) returns $P(X \le q)$, the cumulative probability up to and including q. "At least" questions flip the direction, and there are two equivalent ways to compute them: the explicit complement 1 - pbinom(...) or the lower.tail = FALSE argument.
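A sketch of both question types for Binomial(10, 0.3), matching the discussion below. The name p_leq3 comes from the text; p_geq7_a and p_geq7_b are illustrative.

```r
# P(X <= 3) for X ~ Binomial(10, 0.3)
p_leq3 <- pbinom(3, size = 10, prob = 0.3)
p_leq3   # about 0.650

# P(X >= 7), written two equivalent ways
p_geq7_a <- 1 - pbinom(6, size = 10, prob = 0.3)                  # explicit complement
p_geq7_b <- pbinom(6, size = 10, prob = 0.3, lower.tail = FALSE)  # upper tail P(X > 6)
c(p_geq7_a, p_geq7_b)   # both about 0.0106
```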

p_leq3 says that in 10 trials with p = 0.3, three or fewer successes happen about 65% of the time. The two "at least 7" calls return the same ~1% probability: 1 - pbinom(6, ...) subtracts everything up to 6 from 1, while lower.tail = FALSE does the same arithmetic internally. Use whichever reads better in your code; the lower.tail form avoids a potential rounding issue when the tail is extremely small.

A common off-by-one mistake is to call pbinom(7, ...) when you really want "strictly less than 7". The correct call is pbinom(6, ...). Before picking q, always check whether the boundary is included.

Try it: For n = 5 and p = 0.2, find the probability of at least 2 successes.

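Solution, as a minimal sketch using both forms (the names are illustrative):

```r
# P(X >= 2) = 1 - P(X <= 1) for X ~ Binomial(5, 0.2)
try_p_a <- 1 - pbinom(1, size = 5, prob = 0.2)
try_p_b <- pbinom(1, size = 5, prob = 0.2, lower.tail = FALSE)
try_p_a   # 0.26272
```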

Explanation: "At least 2" means X ≥ 2, which is the complement of X ≤ 1. Subtract pbinom(1, ...) from 1, or use lower.tail = FALSE to get the upper tail directly.

How do you find quantiles and simulate with qbinom() and rbinom()?

qbinom() inverts pbinom(): given a cumulative probability, it returns the smallest count whose cumulative probability reaches that threshold. rbinom() draws random binomial counts, useful for simulation, Monte Carlo checks, and bootstrapping. The first argument of rbinom() is the number of random draws to make, not the trial count; the trial count is size.
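The sketch below assumes that q95 is the 95th percentile of Binomial(100, 0.4), the distribution used in the discussion that follows. The seed value is arbitrary, so your draws will differ from any other run that uses a different seed.

```r
# Smallest q with P(X <= q) >= 0.95 for X ~ Binomial(100, 0.4)
q95 <- qbinom(0.95, size = 100, prob = 0.4)
q95   # 48

# Five simulated experiments: first argument = number of draws, size = trials per draw
set.seed(123)
draws <- rbinom(5, size = 100, prob = 0.4)
draws
```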

q95 = 48 tells you that at least 95% of experiments produce 48 or fewer successes, so 48 is a reasonable "worst case except for 5%" threshold. The rbinom() call generates 5 independent samples from Binomial(100, 0.4). Each number is one simulated experiment's success count, and the counts cluster around the mean $np = 40$. set.seed() makes the draws reproducible, so you get the same numbers every time you rerun the code.

Argument order matters: rbinom(5, 100, 0.4) means "give me 5 independent counts, each from Binomial(100, 0.4)", not "5 trials with 100 draws each." Swapping the two is the most common bug with this function.

Try it: Simulate 10 draws from Binomial(20, 0.6) with set.seed(7) and compute the mean of the draws.

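Solution, as a minimal sketch (the name try_sim is illustrative):

```r
set.seed(7)

# 10 draws, each the number of successes in 20 trials with p = 0.6
try_sim <- rbinom(10, size = 20, prob = 0.6)
mean(try_sim)   # compare with the theoretical mean 20 * 0.6 = 12
```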

Explanation: With only 10 draws, the sample mean (12.7) wanders a bit around the theoretical mean np = 12. To tighten the estimate, increase the number of draws: try 10,000 and compare.

Practice Exercises

Ten problems, progressively harder. Each one gives the setup, followed by a solution and an explanation; try the problem yourself before reading on. Variables are prefixed ans or named by exercise so they do not clash with tutorial state.

Exercise 1: Exactly k heads in a fair coin toss

A fair coin is tossed 6 times. What is the probability of exactly 4 heads? Save the answer to ans1.

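Solution, as a minimal sketch:

```r
# P(X = 4) for X ~ Binomial(6, 0.5): number of heads in 6 fair tosses
ans1 <- dbinom(4, size = 6, prob = 0.5)
ans1   # 0.234375
```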

Explanation: P(X = 4) for Binomial(6, 0.5). Four heads in six tosses is close to the mode (3), so the probability is a healthy ~23%.

Exercise 2: At most 2 defects in a batch

A factory produces parts with a 15% defect rate. In a batch of 20 parts, what is the probability of at most 2 defects? Save to ans2.

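Solution, as a minimal sketch:

```r
# P(X <= 2) for X ~ Binomial(20, 0.15): at most 2 defects in a batch of 20
ans2 <- pbinom(2, size = 20, prob = 0.15)
ans2   # about 0.405
```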

Explanation: P(X ≤ 2) in Binomial(20, 0.15). About 40% of batches pass a "2 defects or fewer" gate.

Exercise 3: At least 3 correct by guessing

A quiz has 10 multiple-choice questions, each with 4 options. What is the probability of getting at least 3 correct by guessing randomly? Save to ans3.

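Solution, as a minimal sketch. Guessing among 4 options gives p = 0.25, and "at least 3" is the upper tail above 2:

```r
# P(X >= 3) = P(X > 2) for X ~ Binomial(10, 0.25)
ans3 <- pbinom(2, size = 10, prob = 0.25, lower.tail = FALSE)
ans3   # about 0.474
```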

Explanation: With p = 0.25 and 10 questions, random guessing clears the "3 correct" bar almost half the time. The expected score is np = 2.5, just half a question below the bar.

Exercise 4: Between 6 and 9 "yes" responses

In a survey of 12 people, each person says "yes" with probability 0.6. What is the probability of between 6 and 9 "yes" responses, inclusive? Save to ans4.

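Solution, as a minimal sketch:

```r
# P(6 <= X <= 9) for X ~ Binomial(12, 0.6): sum the PMF over 6:9
ans4 <- sum(dbinom(6:9, size = 12, prob = 0.6))
ans4   # about 0.758
```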

Explanation: Passing 6:9 to dbinom() returns a length-4 vector of PMF values; sum() adds them. The bulk of the mass in Binomial(12, 0.6) lives in this range because np = 7.2.

Exercise 5: Inventory stocking to cover 99% of demand

A store sees 200 customers per day, and each buys a niche product with probability 0.05. What is the smallest stock level that covers at least 99% of demand? Save to ans5.

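Solution, as a minimal sketch. The second line checks coverage just below and at the answer:

```r
# Smallest stock level s with P(demand <= s) >= 0.99, demand ~ Binomial(200, 0.05)
ans5 <- qbinom(0.99, size = 200, prob = 0.05)
ans5   # 18

# Coverage at 17 and 18 units
pbinom(17:18, size = 200, prob = 0.05)   # about 0.988 and 0.994
```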

Explanation: qbinom(0.99, ...) returns 18, the smallest stock level whose cumulative probability first reaches 99%. Stocking 17 would cover only about 98.8% of days, so qbinom() gives you the right safety margin with a single call.

Exercise 6: Simulate dice rolls and compare with theory

Simulate 10,000 experiments of rolling a fair die 50 times, counting rolls that show a 6 (so p = 1/6). Use set.seed(42). Report the simulated mean and standard deviation, and compare with theoretical values $np$ and $\sqrt{np(1-p)}$. Save the vector to sim6.

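Solution, as a minimal sketch. The helper names n_rolls and p_six are illustrative:

```r
set.seed(42)
n_rolls <- 50
p_six   <- 1 / 6

# 10,000 experiments, each counting the sixes in 50 rolls
sim6 <- rbinom(10000, size = n_rolls, prob = p_six)

# Simulated vs theoretical mean and standard deviation
c(sim_mean    = mean(sim6),
  theory_mean = n_rolls * p_six,
  sim_sd      = sd(sim6),
  theory_sd   = sqrt(n_rolls * p_six * (1 - p_six)))
```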

Explanation: Ten thousand draws get the sample mean within 0.02 of $np$ and the sample SD within 0.003 of $\sqrt{np(1-p)}$. This is why Monte Carlo checks are a reliable sanity test for any binomial calculation: the simulation converges fast.

Exercise 7: Mean and variance of a clinical trial response count

In a clinical trial, n = 30 patients each respond to treatment with probability 0.7. Compute the theoretical mean $E[X] = np$ and variance $Var[X] = np(1-p)$. Then draw 10,000 samples with set.seed(11) and compare.

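Solution, as a minimal sketch. The names n7, p7, theory7, and sim7 are illustrative:

```r
n7 <- 30
p7 <- 0.7

# Closed-form moments of Binomial(n, p)
theory7 <- c(mean = n7 * p7, var = n7 * p7 * (1 - p7))   # 21 and 6.3

# Monte Carlo check with 10,000 draws
set.seed(11)
sim7 <- rbinom(10000, size = n7, prob = p7)
rbind(theory = theory7, simulated = c(mean(sim7), var(sim7)))
```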

Explanation: Theory gives mean = 21 and variance = 6.3, and the 10,000 simulated draws land close to both, within Monte Carlo error. For any Binomial(n, p), the mean is $np$ and the variance is $np(1-p)$; these two formulas are worth memorizing.

Exercise 8: Plot the PMF with a barplot

Plot the probability mass function of Binomial(n = 25, p = 0.4) over the counts 0 through 25. Use barplot(). Save the PMF vector to pmf8.

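Solution, as a minimal sketch. The color and axis labels are cosmetic choices:

```r
k8   <- 0:25
pmf8 <- dbinom(k8, size = 25, prob = 0.4)

# One bar per count k, labeled with its value
barplot(pmf8, names.arg = k8, col = "steelblue",
        xlab = "Number of successes (k)", ylab = "P(X = k)",
        main = "PMF of Binomial(25, 0.4)")

# which.max() returns a 1-based position; subtract 1 to get the count k
mode8 <- which.max(pmf8) - 1
mode8   # 10
```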

Explanation: The mode sits at k = 10 (close to np = 10). barplot() accepts the PMF vector directly; names.arg maps each bar to its k value. A quick which.max() - 1 converts the 1-indexed vector position to the 0-indexed count.

Exercise 9: A/B test with binom.test

An A/B test logs 58 conversions in 100 visits. Test the null hypothesis that the true conversion rate is 0.5 against the two-sided alternative, and report the p-value and 95% confidence interval. Save the test object to test9.

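Solution, as a minimal sketch. binom.test() performs an exact test, and its confidence interval is the exact Clopper-Pearson interval:

```r
# Exact binomial test of H0: p = 0.5 against a two-sided alternative
test9 <- binom.test(x = 58, n = 100, p = 0.5, alternative = "two.sided")
test9$p.value    # about 0.133
test9$conf.int   # 95% confidence interval for the conversion rate
```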

Explanation: The p-value of 0.133 sits above the usual 0.05 threshold, so 58/100 is consistent with a true rate of 0.5. The 95% CI of [0.477, 0.678] also contains 0.5. Both signals agree that the observed lift is not statistically significant at this sample size.

Exercise 10: Airline overbooking risk

An airline sells 110 tickets for a plane with 100 seats. Each passenger shows up independently with probability 0.9. What is the probability more than 100 passengers show up (forcing someone off)? Then compute the same probability if the airline sells 115 tickets instead. Save both to ans10a and ans10b.

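Solution, as a minimal sketch. X is the number of passengers who show up, and someone is bumped when X > 100:

```r
# P(X > 100) for X ~ Binomial(tickets sold, 0.9)
ans10a <- pbinom(100, size = 110, prob = 0.9, lower.tail = FALSE)
ans10b <- pbinom(100, size = 115, prob = 0.9, lower.tail = FALSE)
c(sell_110 = ans10a, sell_115 = ans10b)   # about 0.33 and 0.83
```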

Explanation: At 110 tickets, the airline faces a ~33% chance of having to bump passengers, which is already uncomfortable. Selling 115 tickets pushes that risk to ~83%, so bumping becomes the norm on most flights rather than the exception. Use qbinom() to pick a safer ticket count for a target risk level.
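The following sketch shows one way to do that, assuming a hypothetical target of at most a 5% bump risk and candidate ticket counts from 100 to 115. For n tickets, qbinom(0.95, n, 0.9) <= 100 holds exactly when P(X <= 100) >= 0.95, so the qbinom() values can be scanned directly.

```r
# Hypothetical target: at most a 5% chance that more than 100 passengers show up
target_risk <- 0.05
tickets     <- 100:115

# qbinom() is vectorized over size: 95th percentile of show-ups per ticket count
showups_95 <- qbinom(1 - target_risk, size = tickets, prob = 0.9)

# Bump risk grows with tickets sold, so take the largest count that stays safe
safe_max <- max(tickets[showups_95 <= 100])
safe_max                                                      # 106
pbinom(100, size = safe_max, prob = 0.9, lower.tail = FALSE)  # about 0.040
```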

Complete Example: End-to-End Quality Control Workflow

A factory inspects batches of 500 widgets. Historically, 2% of widgets are defective, and the latest batch comes back with 14 defects. This example walks through the binomial functions on that one scenario: expected defects, tail probability, 95th percentile, simulation, and hypothesis test.
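The workflow can be sketched as follows, assuming an observed batch with 14 defects and a one-sided ("greater") test against the historical 2% rate. The seed and variable names are illustrative.

```r
n_batch  <- 500
p_defect <- 0.02
observed <- 14   # defects found in the batch under review

# 1. Expected defects and spread
mu    <- n_batch * p_defect                           # 10
sigma <- sqrt(n_batch * p_defect * (1 - p_defect))    # about 3.13

# 2. Tail risk: P(defects > 15)
tail_exact <- pbinom(15, size = n_batch, prob = p_defect, lower.tail = FALSE)

# 3. 95th percentile of the defect count
q95_defects <- qbinom(0.95, size = n_batch, prob = p_defect)   # 15

# 4. Simulation check of the same tail probability
set.seed(2024)
sims     <- rbinom(10000, size = n_batch, prob = p_defect)
tail_sim <- mean(sims > 15)

# 5. One-sided exact test: is the defect rate above 2%?
qc_test <- binom.test(observed, n_batch, p = p_defect, alternative = "greater")

round(c(mean = mu, sd = sigma, tail_exact = tail_exact, tail_sim = tail_sim,
        p95 = q95_defects, p_value = qc_test$p.value), 4)
```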

The batch averages 10 defects with an SD of about 3.1, so 14 defects sits about 1.3 standard deviations above the mean: noticeable but not alarming. pbinom() puts P(defects > 15) at about 4.7%, and the simulated tail lands within Monte Carlo error of that value. qbinom() pins the 95th percentile at 15 defects. The one-sided binom.test() returns p ≈ 0.133, confirming that 14/500 is not strong evidence of an elevated defect rate. This is the full analyst loop (point estimate, tail risk, quantile, simulation, formal test) in a dozen lines of R.

Summary

| Question asked | Function | Syntax |
|---|---|---|
| P(X = k) exact | dbinom() | dbinom(k, size = n, prob = p) |
| P(X ≤ q) cumulative | pbinom() | pbinom(q, size = n, prob = p) |
| P(X ≥ q) upper tail | pbinom() with lower.tail = FALSE | pbinom(q-1, n, p, lower.tail = FALSE) |
| Smallest k for P(X ≤ k) ≥ α | qbinom() | qbinom(alpha, size = n, prob = p) |
| Random counts | rbinom() | rbinom(N_draws, size = n, prob = p) |
| Range sum P(a ≤ X ≤ b) | vectorized dbinom() | sum(dbinom(a:b, n, p)) |
| Hypothesis test | binom.test() | binom.test(x, n, p = p0) |

The mean of Binomial(n, p) is always $np$ and the variance is always $np(1-p)$. The four functions share one prefix pattern (d/p/q/r) with every other distribution in base R, so the muscle memory you build here transfers to dnorm, ppois, qexp, and beyond.


Continue Learning

- Binomial vs Poisson in R: Understand When Each Distribution Fits Your Counts, the core tutorial these exercises extend.

- Normal Distribution in R, the continuous cousin, also the large-n approximation for the binomial.

- Probability Distributions in R, the bigger picture of the d/p/q/r family across distributions.