Grid Approximation in R: Compute Bayesian Posteriors Without MCMC

Suppose you ran a small website test: 9 visitors saw a new button, 6 of them clicked. You want to know the true click-through rate. Grid approximation gives you the answer as a full probability curve over plausible rates, not just a single number, and it works in 5 lines of base R, no MCMC, no closed-form math, no extra packages.

What is the simplest way to do Bayesian inference in R?

You ran the test, you got 6 clicks out of 9 visitors. The plain answer "6/9 = 0.67" gives you one number, but it doesn't tell you how confident to be in that number. Maybe the true rate is 0.5, you just got lucky with the 9 visitors. Maybe it's 0.85 and you got slightly unlucky. With only 9 visitors, both are possible.

What you really want is a curve: for every possible click-through rate between 0 and 1, how plausible is it given the 6-out-of-9 result? That curve is called the posterior, and there's a way to build it that needs no calculus and no special package. Lay down a fine grid of candidate rates, score each one by how well it would have produced your data, normalize the scores into probabilities, done.

The most likely click-through rate is around 64%, and there is a 95% chance it lies somewhere between 38% and 85%. That huge range, 38% to 85%, is the honest answer when you have only 9 visitors. A simple "6/9 = 67%" hides the uncertainty completely.

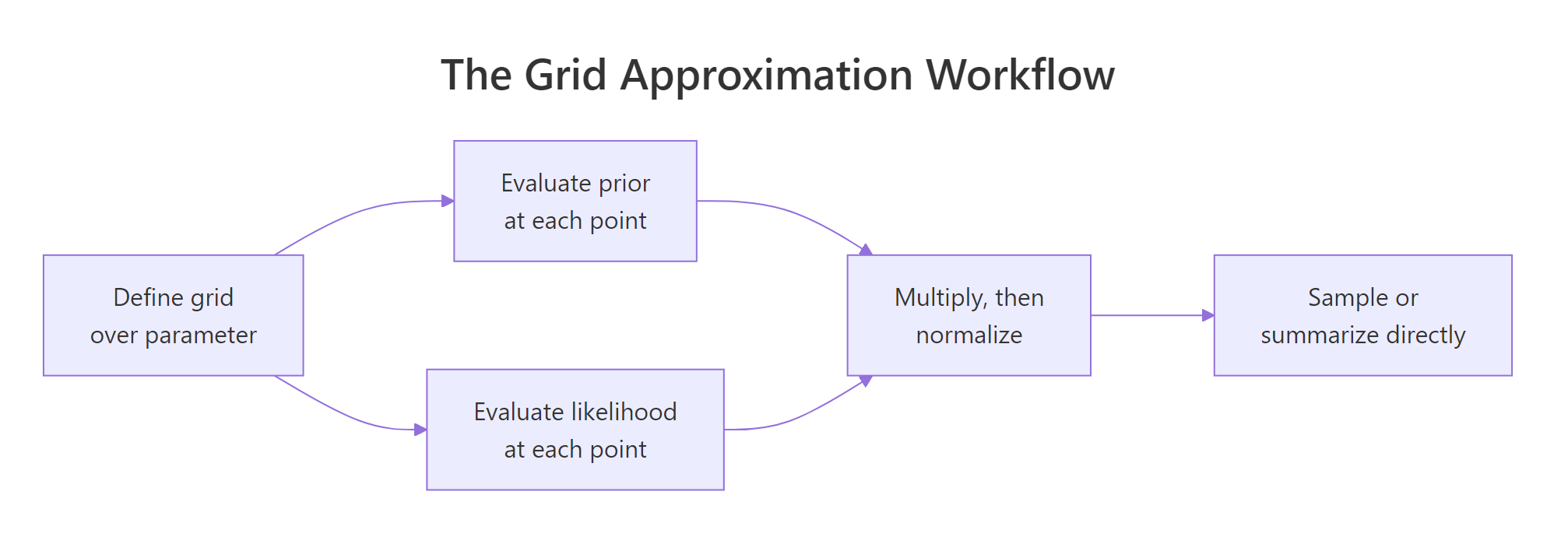

Figure 1: The grid approximation workflow. Discretize, multiply, normalize, summarize.

seq, dbinom, dnorm, etc.) do everything else. Once you have the table of scores, every Bayesian summary, the most likely value, the 95% range, the probability of any event, is just a weighted average over that table.A tiny piece of vocabulary now that you've seen it work. The prior is what you believed about the rate before running the test; we used a flat curve that says "any rate is equally plausible." The likelihood is how well each candidate rate explains the actual data you saw. The posterior is what you should believe after combining the two. That trio (prior, likelihood, posterior) is the entire framework.

Try it: Re-run the calculation with a bigger sample: 12 clicks out of 20 visitors. Does the 95% range tighten?

Click to reveal solution

The most likely rate dropped slightly to 0.60 (matching 12/20 = 0.60), and the 95% range tightened from [0.38, 0.85] to [0.39, 0.79]. More data sharpens the answer, exactly as it should.

How fine should the grid be?

The grid is your resolution: how many candidate rates you score. Too few and the posterior is jagged and inaccurate. Too many and you waste compute. There is a sweet spot, and the way to find it is to keep doubling the grid until the answers stop changing.

The check is concrete. Compute the posterior with 11 grid points, then 101, then 1001, and look at the 95% range each time. When two consecutive runs give nearly the same range, you have enough resolution.

At 11 grid points the range is rough (multiples of 0.1, since that's all the resolution you have). By 101 points the answer has stabilized to two decimals. Going to 1001 changes essentially nothing. For a one-parameter problem on [0, 1], somewhere around 100 to 200 grid points is the comfortable default.

Try it: Find the smallest grid size where the 95% range stops changing meaningfully (less than 0.01 width change) for the original 6-of-9 data.

Click to reveal solution

Going from 25 to 50 changes the range noticeably; from 100 to 200 the change is under 0.01. So 100 points is enough here.

Does grid approximation give the right answer?

For some problems there is also a textbook formula that gives the exact answer in one line. The proportion problem (6 of 9 clicks, flat prior) is one of them. The textbook answer follows what's called the Beta-Binomial pattern, and R's qbeta() function computes its 95% range directly. Comparing grid output to qbeta() is the simplest sanity check that grid approximation is doing the right thing.

The two ranges agree to within a couple of percentage points. The small gap comes from the random sampling step; with more samples or a finer grid the agreement gets tighter. The point is that grid approximation is not a black box, you can verify it against a known answer whenever one exists.

Try it: A different problem with a textbook formula: counts per day. Suppose your customer-support team handled 4, 7, 6, 5, and 8 tickets over five days, and you assume a flat prior on the daily rate. The textbook says the posterior is Gamma(sum + 1, n) where sum = 30 and n = 5. Verify the 95% range with qgamma(), then check by grid approximation.

Click to reveal solution

Textbook says [4.21, 8.47]. Grid says [4.22, 8.48]. Match. Notice the solution used log = TRUE and then exp(... - max(...)), that's the trick from the next section.

What if the data is too big and the math underflows?

Try the same likelihood calculation with 200 visitors instead of 9, and something annoying happens: the numbers get so small that R rounds them all to zero. The likelihood at every grid point becomes 0, the posterior has 0 sum, and the whole thing breaks.

The sum is exactly zero. Each individual dbinom(...) is between 0 and 1, and multiplying 200 of them together produces a number so small that 64-bit floating-point rounds it to zero. The fix is to add logs instead of multiplying. In log space the numbers are negative and well-behaved, even with thousands of observations.

The posterior mean lands at 0.665, which is exactly what you would expect for 200 observations at a true rate of 0.65. The log trick recovers correct answers from data sizes that the naive version cannot handle at all.

log = TRUE inside dbinom, dnorm, dpois, etc., and always subtract the max before exp(). It costs nothing on small datasets and saves you on big ones.Try it: Adapt the log-scale pattern to the tickets example with 100 days of simulated counts.

Click to reveal solution

Posterior mean is 6.02 against a true rate of 6.0. Even with 100 observations the log-scale version is rock-solid; the naive product would have collapsed to zero a long time ago.

How do I get a probability of a specific event?

Sometimes the question is not "what's the rate?" but "what's the chance the rate is above 50%?" or "what's the chance variant B beats variant A?" Direct integration on the grid would work, but a simpler trick is to draw thousands of samples from the posterior and answer with arithmetic on the samples.

sample() already accepts a prob = argument. Hand it the grid as the choices and the posterior as the weights, and you get draws that follow the posterior. Then any question about the rate becomes a question about the proportion of draws that satisfy it.

There is roughly an 82% chance the true click-through rate is above 50%, and about a 41% chance it sits between 50% and 70%. Those numbers are the kind a stakeholder can act on directly: they say "we are 82% sure the new button is better than a coin flip."

P(rate_B > rate_A), sample 10,000 draws from each posterior and compute mean(b > a). Trying to do that with direct integration is painful; with samples it is one line.Try it: What is the posterior probability that the click-through rate is above 70%?

Click to reveal solution

About 39%. Combined with the 82% chance the rate is above 50%, you can quote either one to whoever is asking.

When does grid approximation stop working?

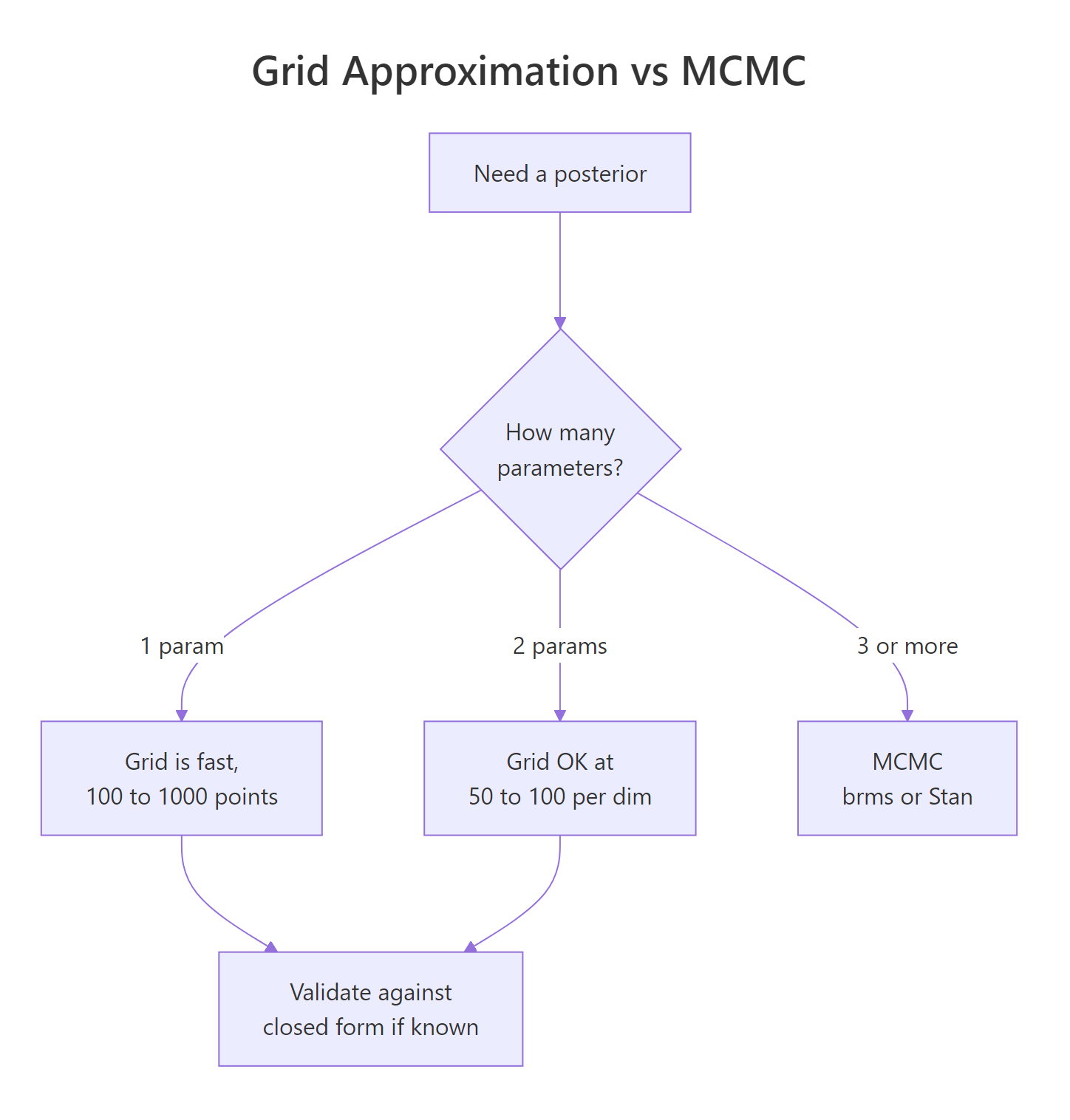

The arithmetic of grid approximation is the same regardless of how many parameters you have, but the cost grows steeply. With one parameter and 100 grid points, you score 100 candidate values. With two parameters at 100 points each, you score 100 × 100 = 10,000. With five parameters at 50 points each, you score 50^5 ≈ 312 million. Beyond about three parameters, even fast computers run out of memory or patience.

A two-parameter case is fine. Here's a Normal model where both the mean and the standard deviation are unknown, fit by grid:

80 × 80 = 6,400 cells, runs in well under a second, posterior mean for the unknown true mean comes out to 5.12 (close to the true value of 5). Add a third parameter at the same resolution and you'd be at 512,000 cells. A fourth and you'd be in the millions. Real Bayesian models often have dozens of parameters; that's the wall grid approximation hits.

Figure 2: When grid wins and when MCMC takes over. The decision tree by parameter count.

brms and rstan sample from posteriors instead of laying down a grid. The mental model you built here, prior plus likelihood gives a posterior, transfers exactly. MCMC just produces samples instead of a normalized table, and the same summary functions (mean, quantile, mean(samples > x)) work on those samples too.Try it: How many cells does grid approximation need for 5 parameters at 50 points each? Compare that to the 6,400 cells of the 2-parameter case.

Click to reveal solution

312 million cells, each requiring a likelihood evaluation. At 100 microseconds per evaluation that's about nine hours just for the grid scoring step. This is exactly the wall MCMC was invented to scale past.

Practice Exercises

Exercise 1: A click-through rate from a smaller sample

A new ad got 8 clicks from 50 impressions. Use a flat prior on the click-through rate, build the posterior by grid approximation, and report the posterior mean, 95% range, and the probability that the true rate exceeds 5%. Verify the 95% range against qbeta().

Click to reveal solution

The grid 95% range and the textbook qbeta() range agree to within ~0.01. There's better than a 99% chance the true rate exceeds 5%.

Exercise 2: Two parameters at once, Normal mean and SD

Five IQ measurements were 130, 125, 142, 118, 134. Both the underlying mean and standard deviation are unknown. Use a two-parameter grid on mu over [100, 160] and sigma over [1, 30], 60 points per dimension, with a flat prior on both. Report the posterior mean for mu and the posterior probability that mu > 130.

Click to reveal solution

Posterior mean of mu is 129.7. The data mean is 129.8, so the flat prior plus 5 observations gives essentially the data mean. Posterior probability that mu exceeds 130 is about 46%, basically a coin flip given how few measurements you have.

Exercise 3: A sequential-update sanity check

Bayes is order-independent: updating once with a combined dataset should give the same posterior as updating one observation at a time. Verify this for a small Bernoulli example. Start from a flat prior, update with the first three flips of c(1, 1, 0) one at a time (using each posterior as the next prior), then compare to a single update with all three at once.

Click to reveal solution

Identical to the last decimal. Sequential and one-shot updates give the same posterior, which is exactly the order-independence property of Bayes' theorem.

Complete Example: A/B Test by Grid Approximation

Marketing tested two button colors. Variant A: 84 clicks from 1,200 impressions. Variant B: 105 clicks from 1,180 impressions. Question: what's the probability that B's true click-through rate is higher than A's?

A's most likely rate is 7.1%. B's is 9.0%. There is a 97% probability that B's true rate is higher than A's, which is the kind of clean answer you can hand to a stakeholder without translating from p-values. Behind the scenes, this entire calculation took six lines of base R and one grid.

Summary

| Step | What you do | R idiom |

|---|---|---|

| Define the grid | A fine sequence over the parameter range | seq(low, high, length.out = N) |

| Evaluate the prior | A density value at each grid point | dbeta, dgamma, dnorm, etc. |

| Evaluate the likelihood | Use log = TRUE for safety on big data |

dbinom(..., log = TRUE) |

| Combine and rescale | Subtract max, exp, normalize | exp(log_post - max(log_post)) |

| Summarize | Weighted mean, weighted quantiles | weighted.mean(), quantile(samples, ...) |

| Sample for derived quantities | Draws from the grid weighted by posterior | sample(grid, N, replace = TRUE, prob = post) |

Grid approximation works whenever you have one or two parameters. Beyond that, MCMC tools like brms and rstan take over, but the mental model you built here, prior plus likelihood becomes posterior, is exactly what those tools do under the hood.

References

- McElreath, R. Statistical Rethinking, 2nd ed. Chapman & Hall, 2020. Chapter 2 introduces grid approximation with the globe-tossing example. xcelab.net/rm/statistical-rethinking.

- Johnson, A. A., Ott, M. Q., Dogucu, M. Bayes Rules!, Chapman & Hall, 2022. Chapter 6 covers grid approximation in R, with closed-form verification. bayesrulesbook.com/chapter-6.

- Pruim, R. (Re)Doing Bayesian Data Analysis, Chapter 5. The grid method on a log scale, with discrete-parameter examples. rpruim.github.io/Kruschke-Notes.

- Gelman, A. et al. Bayesian Data Analysis, 3rd ed. Chapman & Hall, 2013. Chapter 10 covers approximate inference methods broadly.

- R Core Team.

sample()documentation: weighted sampling via theprob =argument is the foundation of grid posterior summaries. stat.ethz.ch/R-manual. - CRAN Task View: Bayesian Inference. cran.r-project.org/web/views/Bayesian.html. Curated list of Bayesian R packages.

Continue Learning

- Bayesian Statistics in R, the section opener that builds the prior-likelihood-posterior intuition with simpler examples.

- Conjugate Priors in R, the closed-form shortcuts that work whenever the prior and likelihood pair up nicely (and that grid approximation can verify against).

- Bayes' Theorem in R, the discrete foundation that motivates everything in this post.