Eigenvalues & Eigenvectors in R: eigen(), Why They Power PCA & More

An eigenvector of a square matrix A is a non-zero vector whose direction is unchanged when A acts on it; the matrix only rescales it by a number called the eigenvalue. In R, eigen(A) returns both, and they unlock PCA, spectral decomposition, and stability analysis. This tutorial walks through the geometry, the math, and the code, using only base R so everything runs in your browser.

How do you compute eigenvalues and eigenvectors in R?

The fastest way to get comfortable with eigenvalues is to compute one. We will hand a small symmetric matrix to eigen() and read what comes back. The function returns a list with two slots, values and vectors, and once you can read that output the rest of the article is interpretation.

The list e has two named slots. e$values holds the eigenvalues sorted by absolute size, largest first: 4.618 and 2.382. e$vectors is a matrix whose columns are the matching eigenvectors, each rescaled to have unit length. So column 1 of e$vectors pairs with eigenvalue 4.618, and column 2 pairs with 2.382. Note the sign of an eigenvector is not unique; multiplying any column by -1 gives a valid eigenvector too.

Try it: Build the symmetric matrix ex_M <- matrix(c(2, 1, 1, 2), 2, 2) and report its largest eigenvalue.

Click to reveal solution

Explanation: eigen(ex_M)$values returns c(3, 1) already sorted in descending order, so the first element is the largest.

What does Av = λv actually mean geometrically?

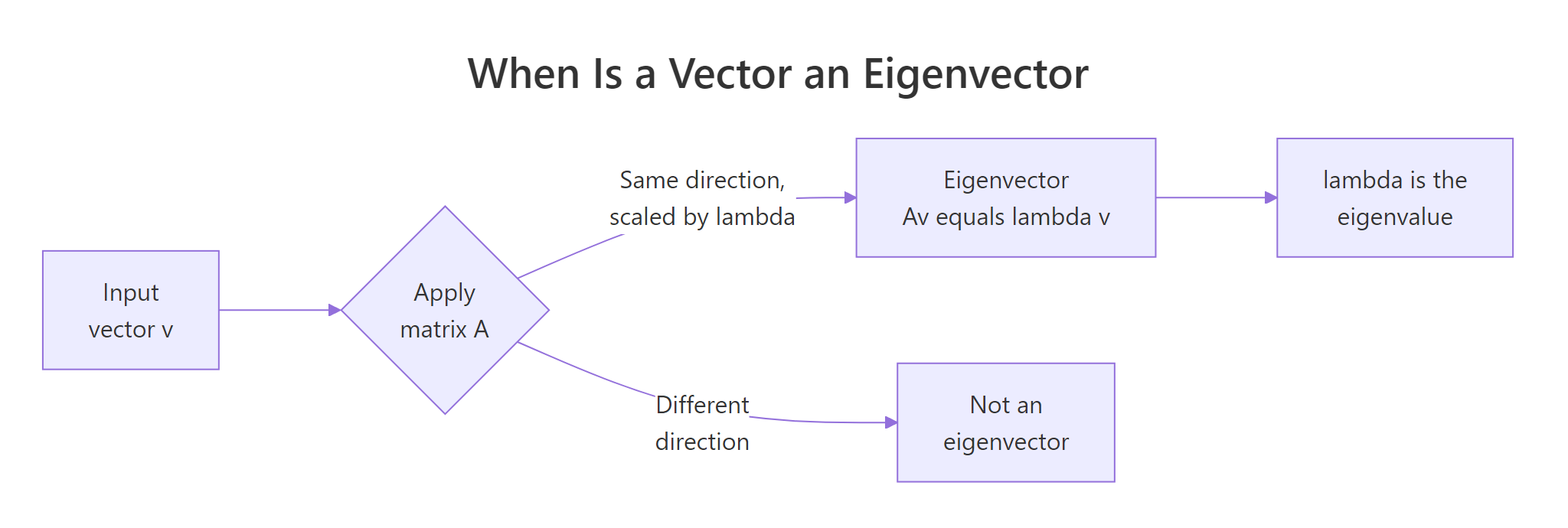

A matrix A takes any input vector v and returns a new vector Av. For most input vectors Av points in a different direction than v. Eigenvectors are the special directions where A does not rotate the vector, it only stretches or shrinks it. The stretch factor is the eigenvalue.

The defining equation is:

$$A \mathbf{v} = \lambda \mathbf{v}$$

Where:

- $A$ is a square matrix

- $\mathbf{v}$ is a non-zero vector (the eigenvector)

- $\lambda$ is a scalar (the eigenvalue)

Read it as: "applying A to v is the same as scaling v by λ." Let us check this for the matrix A and its first eigenvector from the previous block, then contrast with a non-eigenvector w to see what "different direction" looks like.

A %*% v1 and lambda1 * v1 produce identical column vectors, so v1 truly is an eigenvector and lambda1 is its eigenvalue. The non-eigenvector w = (1, 0) maps to (4, 1), that is not a scalar multiple of (1, 0), so w is rotated by A, not just stretched. Eigenvectors are the rare "stretch-only" directions.

Figure 1: When applying A leaves a vector's direction unchanged, that vector is an eigenvector.

Try it: Verify that the second eigenvector of A also satisfies A v = λ v. Save the difference A v - λ v to ex_diff (it should be near zero).

Click to reveal solution

Explanation: Subtracting lambda2 * v2 from A %*% v2 gives the zero vector (up to floating-point noise), confirming the second pair satisfies the eigenvalue equation.

How do you read what eigen() returns?

The list returned by eigen() is small but worth dissecting once. values is a numeric vector (or complex if needed). vectors is a square matrix whose columns are the eigenvectors, each normalised to unit length. For symmetric A, those columns are also mutually orthogonal, a property we will lean on heavily for PCA and spectral decomposition.

colSums(V^2) returned c(1, 1), confirming each eigenvector has length 1. The Gram matrix V'V is the 2x2 identity, which means the two eigenvectors are perpendicular. For any symmetric matrix this is guaranteed by the spectral theorem; for non-symmetric matrices the eigenvectors generally are not orthogonal, so do not assume it.

symmetric = TRUE when you know A is symmetric. R uses a faster, more numerically stable algorithm and avoids tiny imaginary parts caused by floating-point noise. Wrapping a known-symmetric matrix as eigen(A, symmetric = TRUE) is a free win.Try it: Build a 3x3 covariance matrix ex_S from random data and verify its eigenvectors are orthonormal, the off-diagonals of t(V) V should be near zero.

Click to reveal solution

Explanation: The covariance matrix is symmetric, so its eigenvectors are orthonormal. t(V) %*% V produces the identity, with off-diagonals exactly zero up to numerical precision.

When does eigen() return complex numbers, and is that a problem?

A non-symmetric real matrix can have eigenvalues that are complex numbers, and they always come in conjugate pairs (a + bi and a - bi). Geometrically, complex eigenvalues mean the matrix mixes rotation with stretching, which has no purely real direction it leaves alone. The classic example is a 90-degree rotation matrix.

The eigenvalues are 0 + 1i and 0 - 1i, pure imaginary, magnitude 1, phase ±π/2. That matches geometry: rotation by 90 degrees leaves no real vector pointing the same way, but in complex space the rotation is a multiplication by i. Mod() returns the magnitude and Arg() the phase, two helpers you will reach for whenever complex output appears.

isSymmetric(A) before passing symmetric = TRUE. Round-off in user-built matrices is a frequent culprit.Try it: Build the matrix matrix(c(0, 2, -2, 0), 2, 2) (a scaled rotation) and confirm its eigenvalues are a complex conjugate pair.

Click to reveal solution

Explanation: Scaling the rotation by 2 multiplies the magnitudes of the eigenvalues by 2, so the pair becomes ±2i. The matrix is still asymmetric, hence still complex.

How does eigen() power PCA?

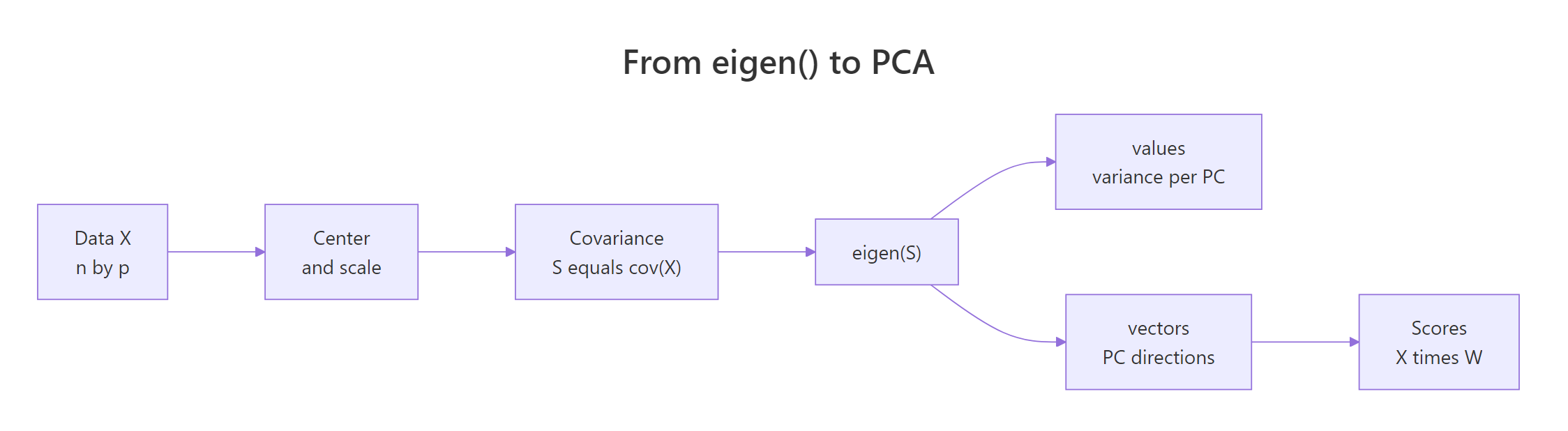

Principal component analysis finds the directions in which your data varies the most. Those directions are exactly the eigenvectors of the covariance matrix of the (centred) data, and the eigenvalues are the variances along each direction. Once you see this, PCA stops looking like magic, it is just eigen() applied to cov(X).

Figure 2: The pipeline from raw data to principal components, with eigen() at its core.

The pipeline is: take your data matrix X, centre and scale it, compute the covariance matrix S, call eigen(S), and read off the principal directions. Let us run it on the four numeric columns of iris.

The first eigenvalue (2.918) accounts for about 73% of the total variance, the second adds another 23%. So PC1 plus PC2 together capture 95% of the variation in iris, that is the rationale for the famous 2D iris scatter plot. The third and fourth components contribute almost nothing, which is why you can safely drop them.

To turn the eigenvectors into actual PC scores, multiply the centred data by the eigenvector matrix. That is exactly what prcomp() does internally.

Our hand-rolled scores match prcomp()$x row for row. The full chain, scale, covariance, eigen, multiply, is prcomp() minus a few conveniences. If your scores ever differ by a column-wide sign flip, that is normal: eigenvectors are unique up to sign, and different solvers pick different conventions.

prcomp() calls svd(), not eigen(), for numerical stability. SVD operates on the data matrix directly, which avoids forming the covariance matrix and squaring its condition number. For teaching and small datasets eigen() is fine; for production, prefer prcomp().Try it: Compute the proportion of variance explained by PC1 alone for the iris covariance and save it to ex_pc1_var.

Click to reveal solution

Explanation: The proportion of variance is each eigenvalue divided by the sum. PC1's eigenvalue is the largest, and dividing it by the total gives 0.7296, about 73% of the variance.

What is spectral decomposition and how do you reconstruct A?

For any symmetric matrix A, the spectral theorem says you can factor it into three pieces: an orthogonal eigenvector matrix V, a diagonal eigenvalue matrix D, and the transpose V'. This is the spectral decomposition.

$$A = V D V^{\top}$$

Where:

- $V$ is the matrix of eigenvectors stacked column-wise (orthonormal, so $V^{\top}V = I$)

- $D$ is the diagonal matrix with eigenvalues on its diagonal

- $V^{\top}$ is the transpose of $V$

This factoring is more than algebraic neatness. It lets you replace any matrix function f(A) with V f(D) V', and f(D) is trivial because D is diagonal. Powers, square roots, exponentials, inverses all collapse into operations on a list of numbers.

A_recon reproduces the original A exactly. The takeaway: the eigenvalues and eigenvectors carry all the information in A. They are a coordinate change into the matrix's natural axes, plus a scale per axis.

Now apply the trick to compute the matrix square root, a matrix Asq such that Asq %*% Asq = A. Take the square root element-wise on D, sandwich it between V and V'.

Asq %*% Asq recovers the original A, so Asq is a valid square root. The same recipe works for A^k (use D^k), exp(A) (use exp(D)), and the inverse A^{-1} (use 1/D, provided no eigenvalue is zero). Spectral decomposition is the engine behind matrix functions in numerical software.

A^k, exp(A), or A^{1/2} in a paper, the implementation is almost always: decompose, transform the eigenvalues, recompose. The "hard" part of the work is the one call to eigen().Try it: Compute the inverse of A via spectral decomposition (V diag(1/values) V') and confirm it matches solve(A).

Click to reveal solution

Explanation: Inverting a symmetric matrix is V diag(1/values) V'. The result agrees with solve(A) to six decimal places, confirming the spectral inverse is correct.

Practice Exercises

These capstones combine concepts from multiple sections. Use distinct variable names (cap_*, pca_loadings) so you do not overwrite the tutorial state.

Exercise 1: Largest eigenvalue of a random covariance

Generate a 100x3 matrix of standard normal samples with set.seed(11), compute its sample covariance, then report the largest eigenvalue and the proportion of variance it explains. Save the proportion to cap_var1.

Click to reveal solution

Explanation: With 100 observations and 3 columns, the first eigenvalue captures roughly a third of the variance. The exact number depends on the random seed; about 0.33–0.4 is typical.

Exercise 2: Reproduce prcomp loadings via eigen()

Compute the rotation matrix from prcomp(iris[, 1:4], scale. = TRUE)$rotation two ways: (1) call prcomp() directly, (2) build it from eigen(cor(iris[, 1:4]))$vectors. Save the eigen-based loadings to pca_loadings. Account for column-sign ambiguity by flipping signs to match.

Click to reveal solution

Explanation: cor() of unscaled iris equals cov() of scaled iris, so eigen(cor(...))$vectors matches prcomp(..., scale. = TRUE)$rotation up to per-column sign. The sign-flip loop aligns the conventions.

Exercise 3: Compute A^10 the spectral way

Take cap_A <- matrix(c(2, 1, 1, 2), 2, 2). Compute cap_A %^% 10 two ways: (1) loop multiply 10 times, (2) spectral way V diag(values^10) V'. Confirm both methods agree.

Click to reveal solution

Explanation: Both routes give the same matrix. For k = 10 the loop is fast, but for k = 10000 the spectral version stays O(1) in the exponent while the loop is O(k), that is why eigendecomposition is the standard way to compute matrix powers.

Complete Example: PCA on mtcars from scratch

End-to-end pipeline: pick four mtcars columns, scale them, build the covariance, run eigen(), project onto the first two components, plot the result, and check against prcomp().

PC1 explains 72% of the variance and PC2 another 19%, so plotting in 2D preserves about 91% of the structure. The hand-built scores_cars matches prcomp()$x for PC1 exactly and for PC2 up to a column sign flip, that is the eigenvector-sign ambiguity at work, harmless for any downstream visual or distance computation.

Summary

Quick reference for everyday work:

| Goal | Code |

|---|---|

| Eigenvalues only (faster) | eigen(A, only.values = TRUE)$values |

| Both, A is symmetric | eigen(A, symmetric = TRUE) |

| PCA loadings | eigen(cov(scale(X)))$vectors (or prcomp(X, scale. = TRUE)) |

| Variance per PC | eigen(cov(scale(X)))$values |

| Matrix function f(A), A symmetric | V %*% diag(f(values)) %*% t(V) |

| Spectral inverse | V %*% diag(1/values) %*% t(V) |

Three facts to keep in your head: eigenvalues are sorted by absolute value (largest first); eigenvectors are columns of e$vectors, normalised to unit length; for symmetric A, the eigenvectors are orthonormal and eigenvalues are real.

Figure 3: Where the pieces of eigen() fit together.

References

- R Core Team,

eigen()reference manual. Link - Strang, G., Introduction to Linear Algebra, 5th ed., chapter 6 (Eigenvalues and Eigenvectors). Wellesley-Cambridge Press (2016).

- Trefethen, L.N. & Bau, D., Numerical Linear Algebra, lectures 24–25. SIAM (1997).

- Golub, G.H. & Van Loan, C.F., Matrix Computations, 4th ed., chapter 7 (Symmetric Eigenproblem). Johns Hopkins (2013).

- James, G., Witten, D., Hastie, T., Tibshirani, R., An Introduction to Statistical Learning, chapter 12 (Unsupervised Learning, PCA). Springer. Link

- Hastie, T., Tibshirani, R., Friedman, J., The Elements of Statistical Learning, section 14.5 (Principal Components). Springer. Link

- Wickham, H., Advanced R, 2nd ed., notes on numerical pitfalls. CRC Press. Link

- Schlegel, A., "Eigenvalues and Eigenvectors in R" (RPubs). Link

Continue Learning

- Matrix Operations in R, the prerequisite tour of

%*%,t(),solve(),det(), and the rest of base R's matrix toolkit. - Solving Linear Systems in R, companion piece on

solve()andqr.solve()for square and overdetermined systems. - Singular Value Decomposition in R, the next decomposition to learn after

eigen(); works for any matrix, not just square ones, and is the engine behindprcomp().