Spectral Decomposition in R: Diagonalization & Powers of Matrices

Spectral decomposition factors a symmetric matrix $A$ into $Q \Lambda Q^\top$, where $\Lambda$ holds the eigenvalues on the diagonal and the columns of $Q$ are orthonormal eigenvectors. That single factorisation lets you reconstruct $A$, raise it to any power in one shot, and read off its geometric structure.

What does spectral decomposition do?

The fastest way to understand spectral decomposition is to do it. We will build a small symmetric 3×3 matrix and feed it to eigen(). The eigenvalues come back as stretching factors, and the eigenvectors come back as the directions those stretches happen along. Once you have these two pieces, every other operation in this post is a one-line follow-up. Base R has everything we need, no extra packages.

The matrix A is the symmetric product M^T M, a common way to manufacture a symmetric matrix from any starting matrix. Calling eigen(A) returns a list with two slots. The values slot is a vector of eigenvalues sorted from largest to smallest. The vectors slot is a matrix whose columns are the matching unit eigenvectors. Together they are the spectral decomposition: three numbers and three directions that completely describe how A acts on every vector in 3D space.

Try it: Pull the largest eigenvalue and its matching eigenvector out of dec. The eigenvalues come back already sorted in decreasing order, so this is a one-liner.

Click to reveal solution

Explanation: eigen() always returns eigenvalues in decreasing order, so index [1] is the largest. The matching eigenvector is column 1 of $vectors.

How do you reconstruct A from Q and Λ?

The spectral theorem says $A = Q \Lambda Q^\top$ for any real symmetric matrix. Multiplying that out reconstructs A exactly, up to floating-point noise. The reason this formula is so clean is that for a symmetric matrix, the eigenvector matrix Q is orthogonal, which means $Q^{-1} = Q^\top$. We get to use a transpose where general matrices need a full inverse.

We will plug Q and Lambda from the previous block straight into the formula. Note that Lambda is a vector, so we wrap it with diag() to get the diagonal matrix the formula expects.

all.equal() is the right tolerance check for floating-point comparisons; == would fail on the last bit of every entry. The reconstruction matches A to machine precision. That confirms the decomposition is lossless, the three eigenvalues and three eigenvectors carry all of the information in the original 9 entries of A.

crossprod(Q). For a symmetric matrix's eigenvector matrix, crossprod(Q) should equal the identity. If it does not, your input was not actually symmetric (a common bug when matrices were constructed from data with rounding).Try it: Compute crossprod(Q) and round it to 6 decimal places. The result should be the 3×3 identity matrix.

Click to reveal solution

Explanation: crossprod(Q) is shorthand for t(Q) %*% Q. For an orthogonal matrix, this is the identity by definition. Rounding hides the tiny floating-point drift that would otherwise show up in the off-diagonal entries.

How do you compute matrix powers in one shot?

This is the result that makes spectral decomposition genuinely useful. Once you have $A = Q \Lambda Q^\top$, raising A to any integer power k is

$$A^k = Q \Lambda^k Q^\top$$

and because $\Lambda$ is diagonal, $\Lambda^k$ is just the element-wise power on the diagonal, no matrix multiplication at all. That turns k - 1 matrix multiplies into one element-wise vector power plus two matrix multiplies, regardless of how big k is.

To see the payoff, we will compute $A^{10}$ two ways. First the naive way: chain ten copies of A together with Reduce() and %*%. Then the spectral way.

Reduce() walks left to right multiplying ten copies of A. We picked 10 here because it is small enough to read but big enough that the entries blow up, eigenvalues greater than 1 dominate quickly.

The two approaches agree to floating-point precision. The spectral form does the same job in three operations regardless of whether k is 10 or 10,000. That speedup matters most for fractional powers ($A^{1/2}$, the matrix square root) and negative powers ($A^{-1}$, the inverse), both of which become element-wise on the eigenvalues.

k underflows to 0 and an eigenvalue greater than 1 raised to a large k overflows to Inf. If your matrix has eigenvalues spanning many orders of magnitude, work with log(Lambda) * k and exponentiate carefully, or use a numerically aware library like expm.Try it: Compute $A^3$ via spectral decomposition and verify it matches A %*% A %*% A.

Click to reveal solution

Explanation: Same formula as A^10, just with Lambda^3 on the diagonal. The cost is identical regardless of the exponent.

Does spectral decomposition work for non-symmetric matrices?

Yes, but with one important change. For a general (diagonalisable) matrix B, the formula becomes

$$B = V D V^{-1}$$

where the columns of V are still eigenvectors but they are not orthogonal anymore. That means we cannot replace $V^{-1}$ with $V^\top$, we have to compute the actual inverse with solve(). The matrix powers shortcut still works:

$$B^k = V D^k V^{-1}$$

Let's see this in action with an asymmetric matrix.

crossprod(V) is clearly not the identity, which proves V is not orthogonal. So the symmetric trick t(V) will not give us the inverse. We have to use solve(V).

Both checks pass. The decomposition still works, we just paid the cost of one explicit inverse instead of getting it for free from a transpose.

eigen() automatically returns complex types in that case, and the same V D V^{-1} formula still works, just with complex arithmetic. If you reconstruct and the imaginary parts are tiny noise, drop them with Re() after verifying.Try it: Compute $B^2$ via spectral decomposition and verify it matches B %*% B.

Click to reveal solution

Explanation: The non-symmetric formula is V D^k V^-1. We square the eigenvalues element-wise on the diagonal and use solve(V) for the inverse since V is not orthogonal here.

Practice Exercises

These capstone problems combine the concepts from above. Use distinct variable names with the my_ prefix so your work does not overwrite the tutorial state.

Exercise 1: Symmetric A^5 two ways

Build the symmetric matrix my_S <- crossprod(matrix(c(1, 2, 1, 0, 1, 1, 2, 0, 1), nrow = 3)). Compute my_S raised to the 5th power two ways: a matrix-multiply chain in my_pow_slow and a spectral computation in my_pow_fast. Verify they agree.

Click to reveal solution

Explanation: my_S is symmetric (built from crossprod), so the orthogonal-Q form applies and we use t() instead of solve(). Element-wise power on the eigenvalues replaces five matrix multiplies.

Exercise 2: Markov chain stationary distribution

Build the 2-state transition matrix my_P <- matrix(c(0.7, 0.3, 0.2, 0.8), nrow = 2, byrow = TRUE) (row 1 sums to 1, row 2 sums to 1). Decompose it, raise it to the 100th power spectrally, and read the stationary distribution off any row. The stationary distribution is the long-run probability of being in each state.

Click to reveal solution

Explanation: my_P is not symmetric, so we use solve(). After many steps every row of $P^{100}$ converges to the same row vector, that vector is the stationary distribution. We wrap with Re() because numerical drift can leave a tiny imaginary part.

Complete Example: Markov chain steady state via diagonalisation

Here is the workflow end-to-end. We will model weather transitions in three states (Sunny, Cloudy, Rainy), find $P^{50}$ via spectral decomposition, and confirm the steady-state distribution two different ways. The transition matrix is non-symmetric, so this is the V D V^-1 form we just learned.

The largest eigenvalue is exactly 1, every valid transition matrix has this property because rows sum to 1. The other eigenvalues are all less than 1 in absolute value, so their powers shrink to zero. After enough steps, only the eigenvalue-1 component survives, and that survivor is the steady state.

Every row is identical. That tells us the Markov chain has fully converged: starting from any initial state, the probability of being in Sunny / Cloudy / Rainy after 50 steps is the same. We can read the stationary distribution pi straight off any row.

The fixed-point equation $\pi P = \pi$ holds, which is the defining property of a stationary distribution. The chain spends about 56% of its time in Sunny, 30% in Cloudy, and 14% in Rainy, and we got there by raising the transition matrix to a high power in three lines, no simulation required.

Summary

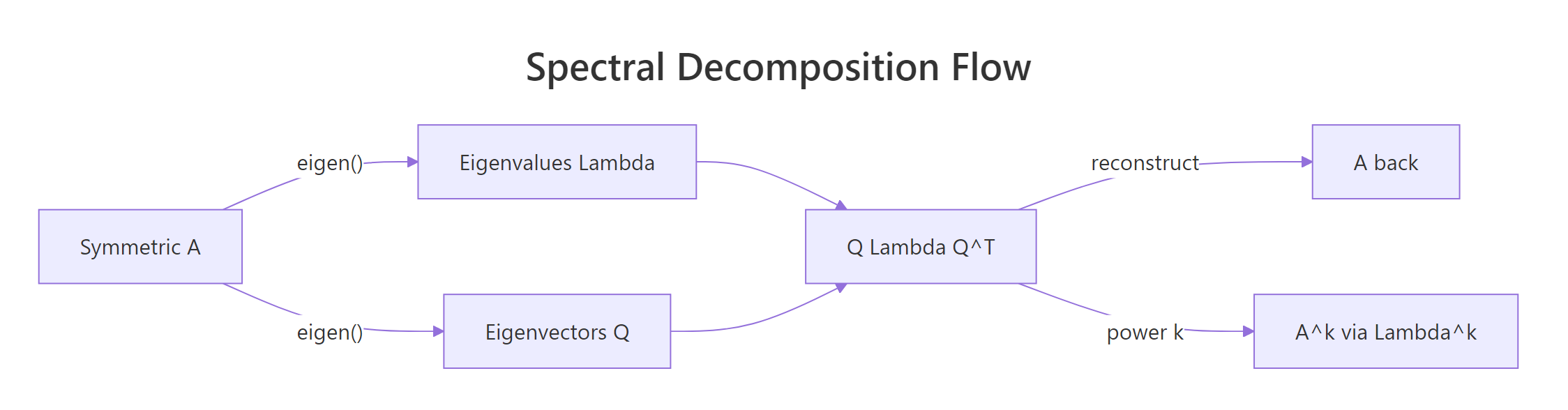

Figure 1: Spectral decomposition turns a symmetric matrix A into three pieces, Q, Λ, Qᵀ, that recombine to give back A or any of its powers.

| Concept | Formula | R idiom |

|---|---|---|

| Symmetric reconstruction | $A = Q \Lambda Q^\top$ | Q %*% diag(Lambda) %*% t(Q) |

| Matrix power (symmetric) | $A^k = Q \Lambda^k Q^\top$ | Q %*% diag(Lambda^k) %*% t(Q) |

| Non-symmetric reconstruction | $B = V D V^{-1}$ | V %*% diag(D) %*% solve(V) |

| Markov stationary distribution | row of $P^k$ for large $k$ | any row of V %*% diag(D^k) %*% solve(V) |

The single mental model: spectral decomposition = independent stretches along independent axes. Reconstruction is regrouping those pieces. Powering is element-wise on the diagonal of stretches. Symmetric matrices buy you a free transpose where general ones need a real inverse.

References

- R Core Team.

eigen, Spectral Decomposition of a Matrix. R base documentation. Link - Wikipedia. Eigendecomposition of a matrix. Link

- Friendly, M. matlib vignette: Eigenvalues and Spectral Decomposition. Link

- Wilkinson, R. MATH3030 Multivariate Statistics §3.2: Spectral / eigen decomposition. Link

- Strang, G. Introduction to Linear Algebra, 5th edition. Wellesley-Cambridge Press (2016). Chapter 6: Eigenvalues and Eigenvectors.

- Trefethen, L. N., and Bau, D. Numerical Linear Algebra. SIAM (1997). Lecture 24: Eigenvalue Algorithms.

Continue Learning

- Eigenvalues & Eigenvectors in R: eigen(), Why They Power PCA & More. The prerequisite for this post: what

eigen()returns, what eigenvalues mean, and how PCA uses them. - Solving Linear Systems in R: solve(), qr() & Least Squares Solutions. The companion factorisation topic, LU and QR decompositions for solving $Ax = b$.

- Matrix Operations in R: Create, Multiply, Invert & Transpose for Statistics. Base R matrix algebra primer if

%*%,t(), orsolve()still feel new.